BIGHUG에서 주최한 시애틀 권역 한인 소상공인을 위한 ChatGPT 활용법에 강사로 참여하면서 만들었던 PPT 가 있었는데요.

그 PPT를 기반으로 유투브 클립을 만들고 있는데 오늘은 두번째 클립을 만들었습니다.

AI 가 얼마나 빨리 변하는지 한달 밖에 안 됐는데 하다 보니까 PPT 내용을 그대로 못 쓰고 비디오 만들면서 계속 업데이트 해야만 했네요.

오늘 내용은 ChatGPT SignUP하면 유리한 점, AI란 무엇인가 그리고 OpenAI의 ChatGPT-4o, Google의 Gemini 그리고 Microsoft의 Copilot 3개를 비교하면서 각각의 장점과 개성들을 파악해 보는 시간을 마련했습니다.

이번에는 OpenAI 의 ChatGPT-4o와 Google의 Gemini 그리고 Microsoft의 Copilot을 모두 사용하면서 비교를 해 봤습니요.

AI 별로 각각 특성과 개성이 있어요.

어떤

세가지를 모두 사용하면서 서로 답변을 비교하고 사용을 해야 할 것 같아요.

챗지피티와 코파일럿은 이제 이미지 생성까지 해 줘서 더 다양하게 이용할 수 있게 된 것 같습니다.

이런 내용들을 다룬 오늘의 유투브 클립 링크가 아래 있습니다.

그리고 아래로 가면 세미나에서 사용했던 presentation 내용을 보실 수 있습니다.

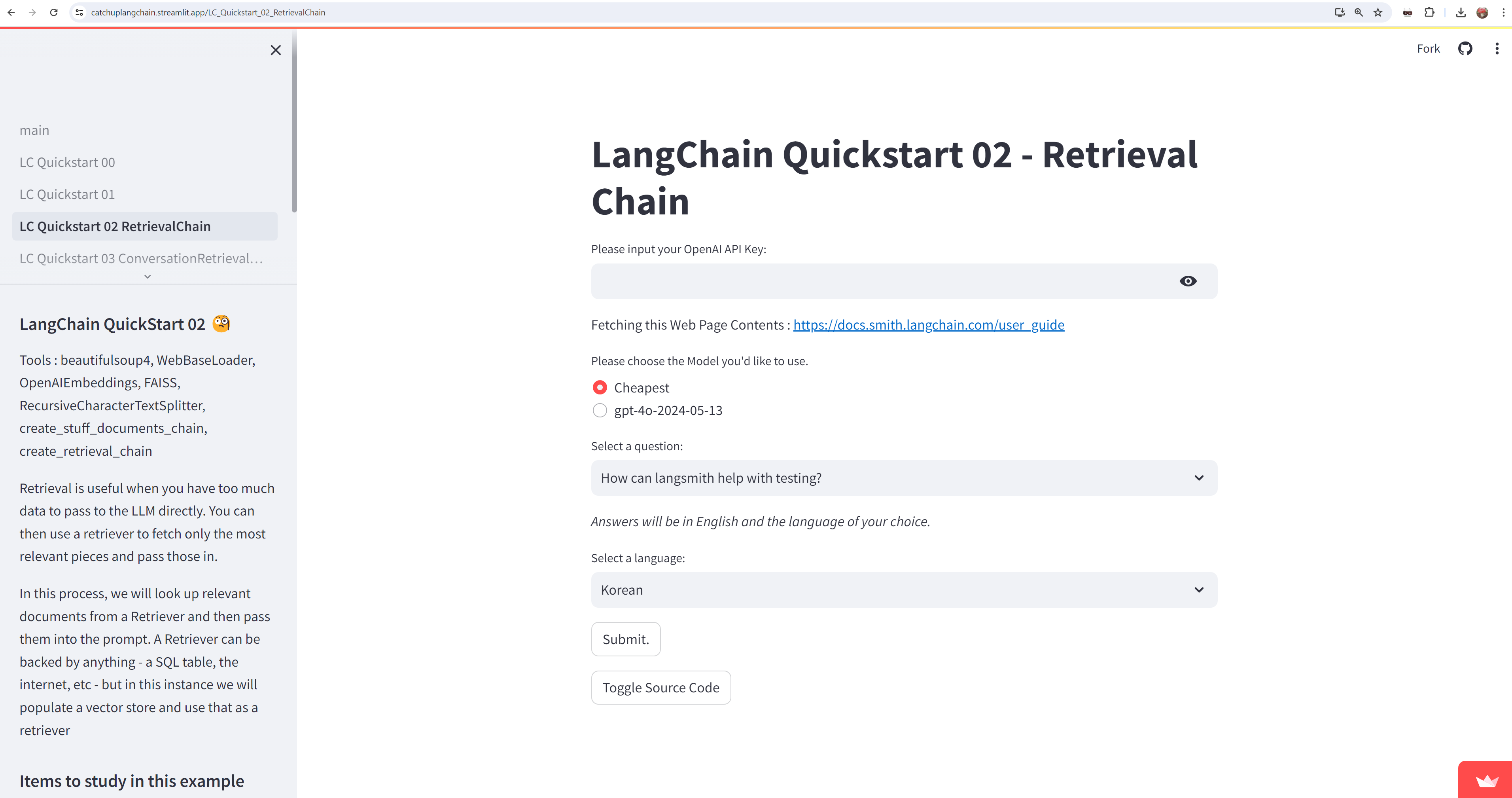

https://catchupai4sb.streamlit.app/

소상공인을 위한 AI

This app was built in Streamlit! Check it out and visit https://streamlit.io for more awesome community apps. 🎈

catchupai4sb.streamlit.app

ChatGPT에게 김치찌개 한식당 이벤트에 사용할 그림을 만들어 달라 그랬더니.....

아주 이쁜 분이 김치찌개를 들고 소개하는 그림을 그려 주네요.

거기다 한복까지 입히고... 김치찌개도 너무 먹음직 스럽게 잘 그렸구요.

원근감을 살려서 너무 가깝거나 먼 곳은 흐리게 표현하고 핵심 부분은 찐하게 표현했습니다.

정말 그림을 잘 그리네요.

이번 편에서는 ChatGPT 승입니다. Gemini와 Copilot 보다 여러모로 ChatGPT가 더 우수했습니다.