D2L - Introduction

2023. 10. 9. 01:26

https://d2l.ai/chapter_introduction/index.html

1. Introduction — Dive into Deep Learning 1.0.3 documentation

1. Introduction

Until recently, nearly every computer program that you might have interacted with during an ordinary day was coded up as a rigid set of rules specifying precisely how it should behave. Say that we wanted to write an application to manage an e-commerce platform. After huddling around a whiteboard for a few hours to ponder the problem, we might settle on the broad strokes of a working solution, for example: (i) users interact with the application through an interface running in a web browser or mobile application; (ii) our application interacts with a commercial-grade database engine to keep track of each user’s state and maintain records of historical transactions; and (iii) at the heart of our application, the business logic (you might say, the brains) of our application spells out a set of rules that map every conceivable circumstance to the corresponding action that our program should take.

최근까지 일상적으로 상호 작용할 수 있는 거의 모든 컴퓨터 프로그램은 작동 방식을 정확하게 지정하는 엄격한 규칙 세트로 코딩되었습니다. 전자상거래 플랫폼을 관리하기 위한 애플리케이션을 작성하고 싶다고 가정해 보겠습니다. 문제를 숙고하기 위해 몇 시간 동안 화이트보드 주위에 모인 후 우리는 작업 솔루션의 광범위한 스트로크에 안주할 수 있습니다. 예를 들어: (i) 사용자는 웹 브라우저 또는 모바일 애플리케이션에서 실행되는 인터페이스를 통해 애플리케이션과 상호 작용합니다. (ii) 당사의 애플리케이션은 상용급 데이터베이스 엔진과 상호 작용하여 각 사용자의 상태를 추적하고 과거 거래 기록을 유지합니다. (iii) 애플리케이션의 중심에는 애플리케이션의 비즈니스 로직(브레인이라고 말할 수 있음)이 생각할 수 있는 모든 상황을 프로그램이 취해야 하는 해당 조치에 매핑하는 일련의 규칙을 설명합니다.

To build the brains of our application, we might enumerate all the common events that our program should handle. For example, whenever a customer clicks to add an item to their shopping cart, our program should add an entry to the shopping cart database table, associating that user’s ID with the requested product’s ID. We might then attempt to step through every possible corner case, testing the appropriateness of our rules and making any necessary modifications. What happens if a user initiates a purchase with an empty cart? While few developers ever get it completely right the first time (it might take some test runs to work out the kinks), for the most part we can write such programs and confidently launch them before ever seeing a real customer. Our ability to manually design automated systems that drive functioning products and systems, often in novel situations, is a remarkable cognitive feat. And when you are able to devise solutions that work 100% of the time, you typically should not be worrying about machine learning.

애플리케이션의 두뇌를 구축하기 위해 프로그램이 처리해야 하는 모든 공통 이벤트를 열거할 수 있습니다. 예를 들어 고객이 장바구니에 항목을 추가하기 위해 클릭할 때마다 프로그램은 해당 사용자의 ID를 요청한 제품 ID와 연결하여 장바구니 데이터베이스 테이블에 항목을 추가해야 합니다. 그런 다음 가능한 모든 특수 사례를 검토하여 규칙의 적합성을 테스트하고 필요한 수정을 시도할 수 있습니다. 사용자가 빈 장바구니로 구매를 시작하면 어떻게 되나요? 처음에 완벽하게 제대로 작동하는 개발자는 거의 없지만(문제를 해결하려면 약간의 테스트 실행이 필요할 수 있음) 대부분의 경우 실제 고객을 만나기 전에 이러한 프로그램을 작성하고 자신있게 시작할 수 있습니다. 종종 새로운 상황에서 작동하는 제품과 시스템을 구동하는 자동화 시스템을 수동으로 설계하는 우리의 능력은 놀라운 인지적 위업입니다. 그리고 100% 작동하는 솔루션을 고안할 수 있다면 일반적으로 머신러닝에 대해 걱정할 필요가 없습니다.

Fortunately for the growing community of machine learning scientists, many tasks that we would like to automate do not bend so easily to human ingenuity. Imagine huddling around the whiteboard with the smartest minds you know, but this time you are tackling one of the following problems:

성장하는 기계 학습 과학자 커뮤니티에 다행스럽게도 우리가 자동화하려는 많은 작업은 인간의 독창성에 그렇게 쉽게 구부리지 않습니다. 당신이 알고 있는 가장 똑똑한 사람들이 화이트보드 주위에 모여 있다고 상상해 보십시오. 그러나 이번에는 다음 문제 중 하나를 다루고 있습니다.

- Write a program that predicts tomorrow’s weather given geographic information, satellite images, and a trailing window of past weather.

지리 정보, 위성 이미지, 과거 날씨의 추적 기간을 바탕으로 내일 날씨를 예측하는 프로그램을 작성하세요.

- Write a program that takes in a factoid question, expressed in free-form text, and answers it correctly.

자유 형식 텍스트로 표현된 사실적 질문을 받아들여 올바르게 답하는 프로그램을 작성하세요.

- Write a program that, given an image, identifies every person depicted in it and draws outlines around each.

이미지가 주어졌을 때 그 안에 묘사된 모든 사람을 식별하고 각 사람 주위에 윤곽선을 그리는 프로그램을 작성하세요.

- Write a program that presents users with products that they are likely to enjoy but unlikely, in the natural course of browsing, to encounter.

사용자가 즐길 가능성이 높지만 자연스러운 탐색 과정에서 접할 가능성이 없는 제품을 사용자에게 제공하는 프로그램을 작성하십시오.

For these problems, even elite programmers would struggle to code up solutions from scratch. The reasons can vary. Sometimes the program that we are looking for follows a pattern that changes over time, so there is no fixed right answer! In such cases, any successful solution must adapt gracefully to a changing world. At other times, the relationship (say between pixels, and abstract categories) may be too complicated, requiring thousands or millions of computations and following unknown principles. In the case of image recognition, the precise steps required to perform the task lie beyond our conscious understanding, even though our subconscious cognitive processes execute the task effortlessly.

이러한 문제의 경우 엘리트 프로그래머라도 처음부터 솔루션을 코딩하는 데 어려움을 겪습니다. 이유는 다양할 수 있습니다. 때로는 우리가 찾고 있는 프로그램이 시간이 지남에 따라 변하는 패턴을 따르기 때문에 정해진 정답이 없습니다! 이러한 경우 성공적인 솔루션은 변화하는 세계에 우아하게 적응해야 합니다. 때로는 관계(픽셀과 추상 범주 간의 관계)가 너무 복잡하여 수천 또는 수백만 번의 계산이 필요하고 알려지지 않은 원리를 따를 수도 있습니다. 이미지 인식의 경우, 우리의 잠재의식적인 인지 과정이 쉽게 작업을 수행하더라도 작업을 수행하는 데 필요한 정확한 단계는 우리의 의식적인 이해 범위를 벗어납니다.

Machine learning is the study of algorithms that can learn from experience. As a machine learning algorithm accumulates more experience, typically in the form of observational data or interactions with an environment, its performance improves. Contrast this with our deterministic e-commerce platform, which follows the same business logic, no matter how much experience accrues, until the developers themselves learn and decide that it is time to update the software. In this book, we will teach you the fundamentals of machine learning, focusing in particular on deep learning, a powerful set of techniques driving innovations in areas as diverse as computer vision, natural language processing, healthcare, and genomics.

머신러닝은 경험을 통해 학습할 수 있는 알고리즘을 연구하는 학문입니다. 기계 학습 알고리즘은 일반적으로 관찰 데이터 또는 환경과의 상호 작용 형태로 더 많은 경험을 축적함에 따라 성능이 향상됩니다. 아무리 많은 경험이 축적되더라도 개발자가 스스로 학습하여 소프트웨어를 업데이트할 시기라고 결정할 때까지 동일한 비즈니스 논리를 따르는 결정론적 전자 상거래 플랫폼과 비교해 보세요. 이 책에서는 특히 컴퓨터 비전, 자연어 처리, 의료, 유전체학과 같은 다양한 분야에서 혁신을 주도하는 강력한 기술 세트인 딥 러닝에 초점을 맞춰 머신 러닝의 기초를 가르칠 것입니다.

1.1. A Motivating Example

Before beginning writing, the authors of this book, like much of the work force, had to become caffeinated. We hopped in the car and started driving. Using an iPhone, Alex called out “Hey Siri”, awakening the phone’s voice recognition system. Then Mu commanded “directions to Blue Bottle coffee shop”. The phone quickly displayed the transcription of his command. It also recognized that we were asking for directions and launched the Maps application (app) to fulfill our request. Once launched, the Maps app identified a number of routes. Next to each route, the phone displayed a predicted transit time. While this story was fabricated for pedagogical convenience, it demonstrates that in the span of just a few seconds, our everyday interactions with a smart phone can engage several machine learning models.

집필을 시작하기 전에 이 책의 저자들도 대부분의 노동력과 마찬가지로 카페인을 섭취해야 했습니다. 우리는 차에 올라 운전을 시작했습니다. Alex는 iPhone을 사용하여 "Siri야"라고 외치며 휴대폰의 음성 인식 시스템을 활성화했습니다. 그런 다음 Mu는 "블루보틀 커피숍으로 가는 길"을 명령했습니다. 전화기에는 그의 명령 내용이 빠르게 표시되었습니다. 또한 우리가 길을 묻는다는 사실을 인식하고 요청을 이행하기 위해 지도 애플리케이션(앱)을 시작했습니다. 지도 앱이 시작되면 여러 경로를 식별했습니다. 각 경로 옆에는 예상 대중교통 시간이 표시됩니다. 이 이야기는 교육적 편의를 위해 제작되었지만 단 몇 초 만에 스마트폰과의 일상적인 상호 작용이 여러 기계 학습 모델에 참여할 수 있음을 보여줍니다.

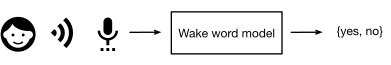

Imagine just writing a program to respond to a wake word such as “Alexa”, “OK Google”, and “Hey Siri”. Try coding it up in a room by yourself with nothing but a computer and a code editor, as illustrated in Fig. 1.1.1. How would you write such a program from first principles? Think about it… the problem is hard. Every second, the microphone will collect roughly 44,000 samples. Each sample is a measurement of the amplitude of the sound wave. What rule could map reliably from a snippet of raw audio to confident predictions {yes,no} about whether the snippet contains the wake word? If you are stuck, do not worry. We do not know how to write such a program from scratch either. That is why we use machine learning.

"Alexa", "OK Google", "Hey Siri"와 같은 깨우기 단어에 응답하는 프로그램을 작성한다고 상상해 보십시오. 그림 1.1.1에 설명된 것처럼 컴퓨터와 코드 편집기만 사용하여 방에서 직접 코딩해 보세요. 첫 번째 원칙에 따라 그러한 프로그램을 어떻게 작성하시겠습니까? 생각해보세요… 문제는 어렵습니다. 매초마다 마이크는 대략 44,000개의 샘플을 수집합니다. 각 샘플은 음파의 진폭을 측정한 것입니다. 원시 오디오 조각에서 해당 조각에 깨우기 단어가 포함되어 있는지에 대한 확실한 예측으로 안정적으로 매핑할 수 있는 규칙은 무엇입니까? 막히더라도 걱정하지 마세요. 우리는 그러한 프로그램을 처음부터 작성하는 방법도 모릅니다. 이것이 바로 우리가 머신러닝을 사용하는 이유입니다.

Here is the trick. Often, even when we do not know how to tell a computer explicitly how to map from inputs to outputs, we are nonetheless capable of performing the cognitive feat ourselves. In other words, even if you do not know how to program a computer to recognize the word “Alexa”, you yourself are able to recognize it. Armed with this ability, we can collect a huge dataset containing examples of audio snippets and associated labels, indicating which snippets contain the wake word. In the currently dominant approach to machine learning, we do not attempt to design a system explicitly to recognize wake words. Instead, we define a flexible program whose behavior is determined by a number of parameters. Then we use the dataset to determine the best possible parameter values, i.e., those that improve the performance of our program with respect to a chosen performance measure.

여기에 트릭이 있습니다. 입력에서 출력으로 매핑하는 방법을 컴퓨터에 명시적으로 지시하는 방법을 모르더라도 우리는 인지적 위업을 스스로 수행할 수 있는 경우가 많습니다. 즉, "Alexa"라는 단어를 인식하도록 컴퓨터를 프로그래밍하는 방법을 모르더라도 스스로 인식할 수 있습니다. 이 기능을 사용하면 깨우기 단어가 포함된 조각을 나타내는 오디오 조각 및 관련 레이블의 예가 포함된 거대한 데이터세트를 수집할 수 있습니다. 현재 기계 학습에 대한 지배적인 접근 방식에서는 깨우기 단어를 인식하기 위한 시스템을 명시적으로 설계하려고 시도하지 않습니다. 대신, 우리는 여러 매개변수에 의해 동작이 결정되는 유연한 프로그램을 정의합니다. 그런 다음 데이터 세트를 사용하여 가능한 최상의 매개변수 값, 즉 선택한 성능 측정과 관련하여 프로그램 성능을 향상시키는 값을 결정합니다.

You can think of the parameters as knobs that we can turn, manipulating the behavior of the program. Once the parameters are fixed, we call the program a model. The set of all distinct programs (input–output mappings) that we can produce just by manipulating the parameters is called a family of models. And the “meta-program” that uses our dataset to choose the parameters is called a learning algorithm.

매개변수는 프로그램의 동작을 조작하면서 돌릴 수 있는 손잡이로 생각할 수 있습니다. 매개변수가 고정되면 프로그램을 모델이라고 부릅니다. 매개변수를 조작하는 것만으로 생성할 수 있는 모든 개별 프로그램(입력-출력 매핑) 세트를 모델 계열이라고 합니다. 그리고 데이터 세트를 사용하여 매개변수를 선택하는 "메타 프로그램"을 학습 알고리즘이라고 합니다.
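To make the vocabulary concrete, here is a minimal sketch of a model, a model family, and a learning algorithm. Everything in it is a hypothetical illustration rather than anything from the book: the family is a one-knob threshold classifier, the helper names `make_model` and `learning_algorithm` are invented, and the toy dataset is made up.

```python
# Hypothetical illustration: a one-knob family of threshold classifiers.

def make_model(threshold):
    """Fixing the knob (threshold) turns the flexible program into one model."""
    def model(x):
        return "yes" if x >= threshold else "no"
    return model

def learning_algorithm(dataset, candidate_thresholds):
    """The 'meta-program': use the dataset to choose the knob setting."""
    def mistakes(threshold):
        model = make_model(threshold)
        return sum(model(x) != label for x, label in dataset)
    # Keep the knob setting that makes the fewest mistakes on the dataset.
    return min(candidate_thresholds, key=mistakes)

# Toy dataset of (input, label) pairs; the numbers are invented.
dataset = [(0.1, "no"), (0.4, "no"), (0.6, "yes"), (0.9, "yes")]

# The family of models is the set of programs reachable by turning the knob.
best = learning_algorithm(dataset, [0.0, 0.25, 0.5, 0.75, 1.0])
model = make_model(best)
print(best, model(0.7))  # 0.5 yes
```

Real model families have far more knobs than one, and real learning algorithms do not try every setting, but the division of roles is the same.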

Before we can go ahead and engage the learning algorithm, we have to define the problem precisely, pinning down the exact nature of the inputs and outputs, and choosing an appropriate model family. In this case, our model receives a snippet of audio as input, and the model generates a selection among {yes,no} as output. If all goes according to plan, the model’s guesses will typically be correct as to whether the snippet contains the wake word.

학습 알고리즘을 시작하기 전에 문제를 정확하게 정의하고, 입력과 출력의 정확한 특성을 파악하고, 적절한 모델 계열을 선택해야 합니다. 이 경우 모델은 오디오 조각을 입력으로 수신하고 모델은 출력으로 {yes,no} 중에서 선택 항목을 생성합니다. 모든 것이 계획대로 진행되면 조각에 깨우기 단어가 포함되어 있는지 여부에 대한 모델의 추측이 일반적으로 정확합니다.

If we choose the right family of models, there should exist one setting of the knobs such that the model fires “yes” every time it hears the word “Alexa”. Because the exact choice of the wake word is arbitrary, we will probably need a model family sufficiently rich that, via another setting of the knobs, it could fire “yes” only upon hearing the word “Apricot”. We expect that the same model family should be suitable for “Alexa” recognition and “Apricot” recognition because they seem, intuitively, to be similar tasks. However, we might need a different family of models entirely if we want to deal with fundamentally different inputs or outputs, say if we wanted to map from images to captions, or from English sentences to Chinese sentences.

올바른 모델 제품군을 선택하면 모델이 "Alexa"라는 단어를 들을 때마다 "예"를 실행하도록 손잡이에 대한 하나의 설정이 있어야 합니다. 깨우기 단어의 정확한 선택은 임의적이기 때문에 노브의 다른 설정을 통해 "살구"라는 단어를 들을 때만 "예"를 실행할 수 있을 만큼 충분히 풍부한 모델 제품군이 필요할 것입니다. 우리는 동일한 모델 패밀리가 "Alexa" 인식과 "Apricot" 인식에 적합할 것으로 예상합니다. 왜냐하면 직관적으로 유사한 작업으로 보이기 때문입니다. 그러나 근본적으로 다른 입력이나 출력을 처리하려면, 예를 들어 이미지를 캡션으로 매핑하거나 영어 문장을 중국어 문장으로 매핑하려는 경우 완전히 다른 모델 계열이 필요할 수 있습니다.

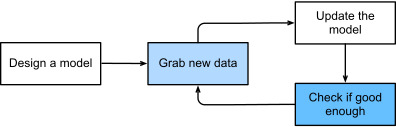

As you might guess, if we just set all of the knobs randomly, it is unlikely that our model will recognize “Alexa”, “Apricot”, or any other English word. In machine learning, the learning is the process by which we discover the right setting of the knobs for coercing the desired behavior from our model. In other words, we train our model with data. As shown in Fig. 1.1.2, the training process usually looks like the following:

짐작할 수 있듯이 모든 손잡이를 무작위로 설정하면 모델이 "Alexa", "Apricot" 또는 기타 영어 단어를 인식할 가능성이 거의 없습니다. 기계 학습에서 학습은 모델에서 원하는 동작을 강제하기 위한 올바른 설정을 찾는 프로세스입니다. 즉, 데이터를 사용하여 모델을 훈련합니다. 그림 1.1.2에서 볼 수 있듯이 훈련 과정은 일반적으로 다음과 같습니다.

1. Start off with a randomly initialized model that cannot do anything useful.

아무 것도 유용한 일을 할 수 없는 무작위로 초기화된 모델로 시작하세요.

2. Grab some of your data (e.g., audio snippets and corresponding {yes,no} labels).

일부 데이터(예: 오디오 조각 및 해당 {yes,no} 라벨)를 가져옵니다.

3. Tweak the knobs to make the model perform better as assessed on those examples.

이러한 예에서 평가된 대로 모델이 더 나은 성능을 발휘하도록 손잡이를 조정하세요.

4. Repeat Steps 2 and 3 until the model is awesome.

모델이 멋지게 나올 때까지 2단계와 3단계를 반복합니다.
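The loop described above can be sketched in a few lines. This is only a toy under stated assumptions: the "model" has a single knob `w` in `y = w * x`, the data is invented so that the right setting is `w = 2`, and the tweak in Step 3 is a simple "try a small random change and keep it if it helps" rule, which is not how deep learning models are actually tuned (gradient-based methods are used instead).

```python
import random

rng = random.Random(0)  # fixed seed so the run is repeatable

# Made-up labeled data: the target is exactly 2 * x.
data = [(float(x), 2.0 * x) for x in range(1, 9)]

def batch_loss(w, batch):
    """Mean squared error of the one-knob model y = w * x on a batch."""
    return sum((w * x - y) ** 2 for x, y in batch) / len(batch)

# Step 1: start from a randomly initialized knob.
w = rng.uniform(-1.0, 1.0)
initial_w = w

for _ in range(500):
    # Step 2: grab some of the data.
    batch = rng.sample(data, 4)
    # Step 3: try a small tweak and keep it only if the batch loss drops.
    candidate = w + rng.uniform(-0.1, 0.1)
    if batch_loss(candidate, batch) < batch_loss(w, batch):
        w = candidate
    # Step 4: repeat.

print(round(w, 2))
```

After enough repetitions the knob settles close to 2, the value that generated the data.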

To summarize, rather than code up a wake word recognizer, we code up a program that can learn to recognize wake words, if presented with a large labeled dataset. You can think of this act of determining a program’s behavior by presenting it with a dataset as programming with data. That is to say, we can “program” a cat detector by providing our machine learning system with many examples of cats and dogs. This way the detector will eventually learn to emit a very large positive number if it is a cat, a very large negative number if it is a dog, and something closer to zero if it is not sure. This barely scratches the surface of what machine learning can do. Deep learning, which we will explain in greater detail later, is just one among many popular methods for solving machine learning problems.

요약하면 깨우기 단어 인식기를 코딩하는 대신 대규모 레이블이 지정된 데이터 세트가 제공되는 경우 깨우기 단어를 인식하는 방법을 학습할 수 있는 프로그램을 코딩합니다. 데이터를 사용하여 프로그래밍하는 것처럼 데이터세트를 제공하여 프로그램의 동작을 결정하는 이러한 행위를 생각할 수 있습니다. 즉, 우리는 기계 학습 시스템에 고양이와 개의 많은 예를 제공함으로써 고양이 탐지기를 "프로그래밍"할 수 있습니다. 이런 식으로 탐지기는 고양이인 경우 매우 큰 양수를 방출하고, 개인 경우 매우 큰 음수를 방출하고, 확실하지 않은 경우 0에 가까운 것을 방출하는 방법을 결국 학습하게 됩니다. 이는 머신러닝이 할 수 있는 일의 표면적인 부분에 불과합니다. 나중에 더 자세히 설명할 딥 러닝은 머신 러닝 문제를 해결하는 데 널리 사용되는 방법 중 하나일 뿐입니다.

1.2. Key Components

In our wake word example, we described a dataset consisting of audio snippets and binary labels, and we gave a hand-wavy sense of how we might train a model to approximate a mapping from snippets to classifications. This sort of problem, where we try to predict a designated unknown label based on known inputs given a dataset consisting of examples for which the labels are known, is called supervised learning. This is just one among many kinds of machine learning problems. Before we explore other varieties, we would like to shed more light on some core components that will follow us around, no matter what kind of machine learning problem we tackle:

깨우기 단어 예에서 우리는 오디오 조각과 이진 레이블로 구성된 데이터 세트를 설명했으며 조각에서 분류까지의 매핑을 근사화하기 위해 모델을 훈련하는 방법에 대해 손으로 설명했습니다. 레이블이 알려진 예제로 구성된 데이터 세트가 주어지면 알려진 입력을 기반으로 지정된 알려지지 않은 레이블을 예측하려고 하는 이러한 종류의 문제를 지도 학습이라고 합니다. 이는 다양한 종류의 머신러닝 문제 중 하나일 뿐입니다. 다른 변형을 탐색하기 전에 우리가 다루는 기계 학습 문제의 종류에 관계없이 우리를 따라갈 몇 가지 핵심 구성 요소에 대해 더 많은 정보를 제공하고 싶습니다.

- The data that we can learn from.

우리가 배울 수 있는 데이터입니다.

- A model of how to transform the data.

데이터를 변환하는 방법에 대한 모델입니다.

- An objective function that quantifies how well (or badly) the model is doing.

모델이 얼마나 잘 수행되는지(또는 나쁘게)를 정량화하는 목적 함수입니다.

- An algorithm to adjust the model’s parameters to optimize the objective function.

목적 함수를 최적화하기 위해 모델의 매개변수를 조정하는 알고리즘입니다.

1.2.1. Data

It might go without saying that you cannot do data science without data. We could lose hundreds of pages pondering what precisely data is, but for now, we will focus on the key properties of the datasets that we will be concerned with. Generally, we are concerned with a collection of examples. In order to work with data usefully, we typically need to come up with a suitable numerical representation. Each example (or data point, data instance, sample) typically consists of a set of attributes called features (sometimes called covariates or inputs), based on which the model must make its predictions. In supervised learning problems, our goal is to predict the value of a special attribute, called the label (or target), that is not part of the model’s input.

데이터 없이는 데이터 과학을 할 수 없다는 것은 말할 필요도 없습니다. 데이터가 정확히 무엇인지 고민하다 수백 페이지를 잃을 수도 있지만, 지금은 우리가 관심을 가질 데이터세트의 주요 속성에 초점을 맞추겠습니다. 일반적으로 우리는 예제 모음에 관심이 있습니다. 데이터를 유용하게 사용하려면 일반적으로 적절한 수치 표현이 필요합니다. 각 예(또는 데이터 포인트, 데이터 인스턴스, 샘플)는 일반적으로 모델이 예측을 수행해야 하는 특성(때때로 공변량 또는 입력이라고도 함)이라는 특성 집합으로 구성됩니다. 지도 학습 문제에서 우리의 목표는 모델 입력의 일부가 아닌 레이블(또는 대상)이라는 특수 속성의 값을 예측하는 것입니다.

If we were working with image data, each example might consist of an individual photograph (the features) and a number indicating the category to which the photograph belongs (the label). The photograph would be represented numerically as three grids of numerical values representing the brightness of red, green, and blue light at each pixel location. For example, a 200×200 pixel color photograph would consist of 200×200×3=120000 numerical values.

이미지 데이터로 작업하는 경우 각 예는 개별 사진(특징)과 사진이 속한 범주를 나타내는 숫자(레이블)로 구성될 수 있습니다. 사진은 각 픽셀 위치의 빨간색, 녹색, 파란색 빛의 밝기를 나타내는 숫자 값의 3개 그리드로 숫자로 표시됩니다. 예를 들어 200×200 픽셀 컬러 사진은 200×200×3=120000개의 숫자 값으로 구성됩니다.
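As a quick check of the arithmetic, the sketch below builds a 200×200 color image as three grids of numbers and counts the values. It uses plain nested lists and all-zero brightness values purely for illustration; the variable names are assumptions, and real code would typically use an array library.

```python
height, width, channels = 200, 200, 3

# One grid per color channel (red, green, blue); each entry is a brightness
# value. All zeros here, since only the shape matters for this check.
image = [[[0 for _ in range(width)] for _ in range(height)]
         for _ in range(channels)]

# Count every numerical value across the three grids.
num_values = sum(len(row) for grid in image for row in grid)
print(num_values)  # 120000
```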

Alternatively, we might work with electronic health record data and tackle the task of predicting the likelihood that a given patient will survive the next 30 days. Here, our features might consist of a collection of readily available attributes and frequently recorded measurements, including age, vital signs, comorbidities, current medications, and recent procedures. The label available for training would be a binary value indicating whether each patient in the historical data survived within the 30-day window.

또는 전자 건강 기록 데이터를 사용하여 특정 환자가 향후 30일 동안 생존할 가능성을 예측하는 작업을 수행할 수도 있습니다. 여기에서 우리의 기능은 연령, 활력 징후, 동반 질환, 현재 약물 치료 및 최근 절차를 포함하여 쉽게 사용할 수 있는 속성과 자주 기록되는 측정값 모음으로 구성될 수 있습니다. 훈련에 사용할 수 있는 레이블은 기록 데이터의 각 환자가 30일 기간 내에 생존했는지 여부를 나타내는 이진 값입니다.

In such cases, when every example is characterized by the same number of numerical features, we say that the inputs are fixed-length vectors and we call the (constant) length of the vectors the dimensionality of the data. As you might imagine, fixed-length inputs can be convenient, giving us one less complication to worry about. However, not all data can easily be represented as fixed-length vectors. While we might expect microscope images to come from standard equipment, we cannot expect images mined from the Internet all to have the same resolution or shape. For images, we might consider cropping them to a standard size, but that strategy only gets us so far. We risk losing information in the cropped-out portions. Moreover, text data resists fixed-length representations even more stubbornly. Consider the customer reviews left on e-commerce sites such as Amazon, IMDb, and TripAdvisor. Some are short: “it stinks!”. Others ramble for pages. One major advantage of deep learning over traditional methods is the comparative grace with which modern models can handle varying-length data.

이러한 경우 모든 예제가 동일한 수의 수치 특징으로 특징지어지면 입력이 고정 길이 벡터라고 말하고 벡터의 (일정한) 길이를 데이터의 차원이라고 부릅니다. 여러분이 상상할 수 있듯이 고정 길이 입력은 편리할 수 있으므로 걱정할 복잡성이 하나 줄어듭니다. 그러나 모든 데이터가 고정 길이 벡터로 쉽게 표현될 수 있는 것은 아닙니다. 현미경 이미지가 표준 장비에서 나올 것이라고 기대할 수는 있지만 인터넷에서 채굴한 이미지가 모두 동일한 해상도나 모양을 가질 것이라고 기대할 수는 없습니다. 이미지의 경우 표준 크기로 자르는 것을 고려할 수 있지만 이러한 전략은 지금까지만 가능합니다. 잘린 부분에서는 정보가 손실될 위험이 있습니다. 더욱이 텍스트 데이터는 고정 길이 표현에 더욱 완고하게 저항합니다. Amazon, IMDb, TripAdvisor와 같은 전자상거래 사이트에 남겨진 고객 리뷰를 생각해 보세요. 일부는 짧습니다: “냄새가 나요!”. 다른 사람들은 페이지를 뒤적입니다. 기존 방법에 비해 딥 러닝이 갖는 주요 장점 중 하나는 현대 모델이 다양한 길이의 데이터를 처리할 수 있는 비교 우위입니다.
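The cost of forcing varying-length data into a fixed length can be seen in a small sketch. It treats a review as a list of words and crops or pads it to a fixed length, by analogy with cropping images. The helper `to_fixed_length`, the `<pad>` filler token, and the second review are all hypothetical; padding is an assumption added here, not something the text above prescribes.

```python
# Two reviews of very different lengths, split into words.
reviews = [
    "it stinks !".split(),
    "the plot rambles on and on for what feels like pages".split(),
]

def to_fixed_length(tokens, length, pad="<pad>"):
    """Crop long sequences and pad short ones so every result has `length` items."""
    cropped = tokens[:length]  # anything past `length` is lost
    return cropped + [pad] * (length - len(cropped))

fixed = [to_fixed_length(review, 5) for review in reviews]
for row in fixed:
    print(row)
```

The short review gains filler tokens that carry no information, while the long one loses everything after its fifth word, which is the information loss that makes fixed-length representations awkward for text.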

Generally, the more data we have, the easier our job becomes. When we have more data, we can train more powerful models and rely less heavily on preconceived assumptions. The regime change from (comparatively) small to big data is a major contributor to the success of modern deep learning. To drive the point home, many of the most exciting models in deep learning do not work without large datasets. Some others might work in the small data regime, but are no better than traditional approaches.

일반적으로 데이터가 많을수록 작업이 더 쉬워집니다. 더 많은 데이터가 있으면 더 강력한 모델을 훈련할 수 있고 선입견에 덜 의존할 수 있습니다. (비교적) 작은 데이터에서 빅 데이터로의 체제 변화는 현대 딥 러닝의 성공에 주요한 기여를 했습니다. 요점을 말하자면, 딥 러닝에서 가장 흥미로운 모델 중 다수는 대규모 데이터 세트 없이는 작동하지 않습니다. 다른 일부는 소규모 데이터 체제에서 작동할 수 있지만 기존 접근 방식보다 낫지 않습니다.

Finally, it is not enough to have lots of data and to process it cleverly. We need the right data. If the data is full of mistakes, or if the chosen features are not predictive of the target quantity of interest, learning is going to fail. The situation is captured well by the cliché: garbage in, garbage out. Moreover, poor predictive performance is not the only potential consequence. In sensitive applications of machine learning, like predictive policing, resume screening, and risk models used for lending, we must be especially alert to the consequences of garbage data. One commonly occurring failure mode concerns datasets where some groups of people are unrepresented in the training data. Imagine applying a skin cancer recognition system that had never seen black skin before. Failure can also occur when the data not only under-represents some groups but also reflects societal prejudices. For example, if past hiring decisions are used to train a predictive model that will be used to screen resumes, then machine learning models could inadvertently capture and automate historical injustices. Note that this can all happen without the data scientist actively conspiring, or even being aware.

마지막으로, 많은 양의 데이터를 보유하고 이를 영리하게 처리하는 것만으로는 충분하지 않습니다. 우리에게는 올바른 데이터가 필요합니다. 데이터가 실수로 가득 차 있거나 선택한 특징이 관심 대상 수량을 예측하지 못하는 경우 학습은 실패할 것입니다. 상황은 진부한 표현으로 잘 포착되었습니다. 쓰레기는 들어오고 쓰레기는 나갑니다. 더욱이, 낮은 예측 성능이 유일한 잠재적인 결과는 아닙니다. 예측 치안 관리, 이력서 심사, 대출에 사용되는 위험 모델과 같은 민감한 기계 학습 애플리케이션에서는 쓰레기 데이터의 결과에 특히 주의해야 합니다. 일반적으로 발생하는 실패 모드 중 하나는 일부 그룹의 사람들이 훈련 데이터에 나타나지 않는 데이터 세트와 관련이 있습니다. 이전에 검은 피부를 본 적이 없는 피부암 인식 시스템을 적용한다고 상상해보세요. 데이터가 일부 집단을 과소 대표할 뿐만 아니라 사회적 편견을 반영하는 경우에도 실패가 발생할 수 있습니다. 예를 들어 과거 채용 결정을 사용하여 이력서를 선별하는 데 사용할 예측 모델을 교육하는 경우 기계 학습 모델이 과거의 불의를 실수로 포착하고 자동화할 수 있습니다. 이 모든 일은 데이터 과학자가 적극적으로 공모하거나 인지하지 않고도 일어날 수 있습니다.

1.2.2. Models

Most machine learning involves transforming the data in some sense. We might want to build a system that ingests photos and predicts smiley-ness. Alternatively, we might want to ingest a set of sensor readings and predict how normal vs. anomalous the readings are. By model, we denote the computational machinery for ingesting data of one type, and spitting out predictions of a possibly different type. In particular, we are interested in statistical models that can be estimated from data. While simple models are perfectly capable of addressing appropriately simple problems, the problems that we focus on in this book stretch the limits of classical methods. Deep learning is differentiated from classical approaches principally by the set of powerful models that it focuses on. These models consist of many successive transformations of the data that are chained together top to bottom, thus the name deep learning. On our way to discussing deep models, we will also discuss some more traditional methods.

대부분의 기계 학습에는 어떤 의미에서 데이터 변환이 포함됩니다. 우리는 사진을 수집하고 웃는 모습을 예측하는 시스템을 구축하고 싶을 수도 있습니다. 또는 일련의 센서 판독값을 수집하고 판독값이 얼마나 정상인지 비정상인지 예측할 수도 있습니다. 모델이란 한 가지 유형의 데이터를 수집하고 다른 유형의 예측을 내놓는 계산 기계를 나타냅니다. 특히, 데이터로부터 추정할 수 있는 통계모델에 관심이 있습니다. 간단한 모델은 적절하게 간단한 문제를 완벽하게 해결할 수 있지만, 이 책에서 초점을 맞추는 문제는 고전적인 방법의 한계를 확장합니다. 딥 러닝은 주로 초점을 맞춘 강력한 모델 세트로 인해 기존 접근 방식과 차별화됩니다. 이러한 모델은 위에서 아래로 연결되는 수많은 연속적인 데이터 변환으로 구성되므로 딥러닝이라는 이름이 붙습니다. 심층 모델을 논의하는 도중에 좀 더 전통적인 방법도 논의할 것입니다.

1.2.3. Objective Functions

Earlier, we introduced machine learning as learning from experience. By learning here, we mean improving at some task over time. But who is to say what constitutes an improvement? You might imagine that we could propose updating our model, and some people might disagree on whether our proposal constituted an improvement or not.

앞서 우리는 경험을 통해 학습하는 머신러닝을 소개했습니다. 여기서 학습한다는 것은 시간이 지남에 따라 일부 작업이 향상된다는 의미입니다. 그러나 개선이 무엇인지 누가 말할 수 있습니까? 우리가 모델 업데이트를 제안할 수 있다고 상상할 수도 있고 일부 사람들은 우리 제안이 개선을 구성하는지 여부에 동의하지 않을 수도 있습니다.

In order to develop a formal mathematical system of learning machines, we need to have formal measures of how good (or bad) our models are. In machine learning, and optimization more generally, we call these objective functions. By convention, we usually define objective functions so that lower is better. This is merely a convention. You can take any function for which higher is better, and turn it into a new function that is qualitatively identical but for which lower is better by flipping the sign. Because we choose lower to be better, these functions are sometimes called loss functions.

학습 기계의 공식적인 수학적 시스템을 개발하려면 모델이 얼마나 좋은지(또는 나쁜지)에 대한 공식적인 척도가 필요합니다. 기계 학습 및 보다 일반적으로 최적화에서는 이러한 목적 함수를 호출합니다. 관례적으로 우리는 일반적으로 낮은 것이 더 좋도록 목적 함수를 정의합니다. 이것은 단지 관례일 뿐입니다. 높을수록 더 좋은 함수를 가져와서 부호를 뒤집어서 질적으로는 동일하지만 낮을수록 더 나은 새로운 함수로 바꿀 수 있습니다. 더 나은 것을 선택하기 위해 더 낮은 것을 선택하기 때문에 이러한 함수를 손실 함수라고 부르기도 합니다.
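The sign-flipping convention is easy to verify on a toy function. In the sketch below, `score` is a made-up higher-is-better function and `loss` is its negation; both names are illustrative only.

```python
def score(w):
    """A made-up quantity where higher is better; it peaks at w = 3."""
    return -(w - 3) ** 2 + 10

def loss(w):
    """The same quantity with the sign flipped, so lower is better."""
    return -score(w)

candidates = [0, 1, 2, 3, 4, 5]
best_by_score = max(candidates, key=score)  # maximize the score
best_by_loss = min(candidates, key=loss)    # minimize the loss
print(best_by_score, best_by_loss)  # 3 3
```

Maximizing the original function and minimizing the flipped one select the same setting, which is why the choice of direction is only a convention.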

When trying to predict numerical values, the most common loss function is squared error, i.e., the square of the difference between the prediction and the ground truth target. For classification, the most common objective is to minimize error rate, i.e., the fraction of examples on which our predictions disagree with the ground truth. Some objectives (e.g., squared error) are easy to optimize, while others (e.g., error rate) are difficult to optimize directly, owing to non-differentiability or other complications. In these cases, it is common instead to optimize a surrogate objective.

숫자 값을 예측하려고 할 때 가장 일반적인 손실 함수는 오차 제곱, 즉 예측과 실제 목표 간 차이의 제곱입니다. 분류의 경우 가장 일반적인 목표는 오류율, 즉 예측이 실제와 일치하지 않는 사례의 비율을 최소화하는 것입니다. 일부 목표(예: 제곱 오류)는 최적화하기 쉬운 반면, 다른 목표(예: 오류율)는 미분성 또는 기타 복잡성으로 인해 직접 최적화하기 어렵습니다. 이러한 경우 대리 목표를 최적화하는 것이 일반적입니다.
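Both objectives are short to write down. The sketch below is illustrative, with invented numbers and a hypothetical helper `error_rate_at`; its last two lines hint at why error rate is awkward to optimize directly: nudging a decision threshold slightly often leaves the error rate exactly unchanged, so it gives no signal about which direction to move.

```python
def squared_error(prediction, target):
    """Square of the difference between a prediction and the ground truth."""
    return (prediction - target) ** 2

def error_rate(predictions, targets):
    """Fraction of examples where the prediction disagrees with the truth."""
    wrong = sum(p != t for p, t in zip(predictions, targets))
    return wrong / len(targets)

print(squared_error(2.5, 3.0))  # 0.25
print(error_rate(["yes", "no", "no", "yes"],
                 ["yes", "yes", "no", "yes"]))  # 0.25

# Made-up classifier scores and true labels.
scores = [0.2, 0.4, 0.7, 0.9]
labels = [False, True, True, True]

def error_rate_at(threshold):
    """Error rate when predicting True for every score >= threshold."""
    predictions = [s >= threshold for s in scores]
    return error_rate(predictions, labels)

# A small change in the threshold leaves the error rate flat.
print(error_rate_at(0.50), error_rate_at(0.51))  # 0.25 0.25
```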

During optimization, we think of the loss as a function of the model’s parameters, and treat the training dataset as a constant. We learn the best values of our model’s parameters by minimizing the loss incurred on a set consisting of some number of examples collected for training. However, doing well on the training data does not guarantee that we will do well on unseen data. So we will typically want to split the available data into two partitions: the training dataset (or training set), for learning model parameters; and the test dataset (or test set), which is held out for evaluation. At the end of the day, we typically report how our models perform on both partitions. You could think of training performance as analogous to the scores that a student achieves on the practice exams used to prepare for some real final exam. Even if the results are encouraging, that does not guarantee success on the final exam. Over the course of studying, the student might begin to memorize the practice questions, appearing to master the topic but faltering when faced with previously unseen questions on the actual final exam. When a model performs well on the training set but fails to generalize to unseen data, we say that it is overfitting to the training data.

최적화 중에 손실을 모델 매개변수의 함수로 생각하고 훈련 데이터세트를 상수로 취급합니다. 훈련을 위해 수집된 몇 가지 예제로 구성된 세트에서 발생하는 손실을 최소화하여 모델 매개변수의 최상의 값을 학습합니다. 그러나 훈련 데이터를 잘 처리한다고 해서 보이지 않는 데이터도 잘 처리할 것이라는 보장은 없습니다. 따라서 우리는 일반적으로 사용 가능한 데이터를 두 개의 파티션으로 분할하려고 합니다. 즉, 모델 매개변수 학습을 위한 훈련 데이터세트(또는 훈련 세트); 그리고 평가를 위해 보류된 테스트 데이터 세트(또는 테스트 세트)입니다. 결국 우리는 일반적으로 모델이 두 파티션 모두에서 어떻게 수행되는지 보고합니다. 훈련 성과는 학생이 실제 최종 시험을 준비하는 데 사용되는 연습 시험에서 획득하는 점수와 유사하다고 생각할 수 있습니다. 비록 결과가 고무적이라고 하더라도 그것이 최종 시험에서의 성공을 보장하지는 않습니다. 공부하는 동안 학생은 연습 문제를 암기하기 시작하고 주제를 마스터한 것처럼 보이지만 실제 최종 시험에서 이전에 볼 수 없었던 문제에 직면하면 머뭇거릴 수도 있습니다. 모델이 훈련 세트에서는 잘 수행되지만 보이지 않는 데이터에 대한 일반화에 실패하면 훈련 데이터에 과적합되었다고 말합니다.
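The practice-exam analogy can be reproduced with a deliberately extreme toy. In this sketch (all names and data are invented) the `memorizer` stores the training answers verbatim, like a student memorizing practice questions, while `threshold_rule` captures the actual pattern. The split simply alternates examples between the two partitions, a simplification of the usual random split.

```python
# Made-up data: the label says whether x is at least 50.
data = [(x, x >= 50) for x in range(100)]

# Hold out every other example for evaluation.
train, test = data[0::2], data[1::2]

memory = dict(train)

def memorizer(x):
    """Recalls training answers exactly; guesses False for anything unseen."""
    return memory.get(x, False)

def threshold_rule(x):
    """A model that has captured the underlying pattern."""
    return x >= 50

def accuracy(model, dataset):
    return sum(model(x) == y for x, y in dataset) / len(dataset)

print(accuracy(memorizer, train), accuracy(memorizer, test))            # 1.0 0.5
print(accuracy(threshold_rule, train), accuracy(threshold_rule, test))  # 1.0 1.0
```

The memorizer looks perfect on the training set and no better than a coin flip on the test set, which is overfitting in its purest form.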

1.2.4. Optimization Algorithms

Once we have got some data source and representation, a model, and a well-defined objective function, we need an algorithm capable of searching for the best possible parameters for minimizing the loss function. Popular optimization algorithms for deep learning are based on an approach called gradient descent. In brief, at each step, this method checks to see, for each parameter, how that training set loss would change if you perturbed that parameter by just a small amount. It would then update the parameter in the direction that lowers the loss.

데이터 소스와 표현, 모델, 잘 정의된 목적 함수를 확보한 후에는 손실 함수를 최소화하기 위한 최상의 매개변수를 검색할 수 있는 알고리즘이 필요합니다. 딥러닝에 널리 사용되는 최적화 알고리즘은 경사하강법이라는 접근 방식을 기반으로 합니다. 간단히 말해서, 각 단계에서 이 방법은 각 매개변수에 대해 해당 매개변수를 조금만 교란할 경우 훈련 세트 손실이 어떻게 변하는지 확인합니다. 그런 다음 손실을 낮추는 방향으로 매개변수를 업데이트합니다.
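The description above translates almost literally into code. The following sketch perturbs each parameter by a small amount, measures how the training loss changes, and moves the parameter in the direction that lowers the loss. The linear model, the three data points, the step size `lr`, and the perturbation size `eps` are all assumptions chosen for illustration; practical deep learning code computes these slopes with automatic differentiation rather than by perturbing parameters one at a time.

```python
# Made-up training set generated by y = 2 * x + 1.
train = [(1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

def loss(params):
    """Mean squared error of the model y = w * x + b on the training set."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in train) / len(train)

def gradient_descent_step(params, lr=0.05, eps=1e-6):
    base = loss(params)
    slopes = []
    for i in range(len(params)):
        # Perturb one parameter slightly and see how the loss changes.
        perturbed = list(params)
        perturbed[i] += eps
        slopes.append((loss(perturbed) - base) / eps)
    # Move each parameter in the direction that lowers the loss.
    return [p - lr * s for p, s in zip(params, slopes)]

params = [0.0, 0.0]
for _ in range(2000):
    params = gradient_descent_step(params)

print([round(p, 2) for p in params])
```

With these settings the parameters end up close to the values `w = 2` and `b = 1` that generated the data.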

1.3. Kinds of Machine Learning Problems

The wake word problem in our motivating example is just one among many that machine learning can tackle. To motivate the reader further and provide us with some common language that will follow us throughout the book, we now provide a broad overview of the landscape of machine learning problems.

동기를 부여하는 예시의 깨우기 단어 문제는 머신러닝이 해결할 수 있는 많은 문제 중 하나일 뿐입니다. 독자에게 더욱 동기를 부여하고 책 전반에 걸쳐 우리를 따라갈 몇 가지 공통 언어를 제공하기 위해 이제 기계 학습 문제의 환경에 대한 광범위한 개요를 제공합니다.

1.3.1. Supervised Learning

Supervised learning describes tasks where we are given a dataset containing both features and labels and asked to produce a model that predicts the labels when given input features. Each feature–label pair is called an example. Sometimes, when the context is clear, we may use the term examples to refer to a collection of inputs, even when the corresponding labels are unknown. The supervision comes into play because, for choosing the parameters, we (the supervisors) provide the model with a dataset consisting of labeled examples. In probabilistic terms, we typically are interested in estimating the conditional probability of a label given input features. While it is just one among several paradigms, supervised learning accounts for the majority of successful applications of machine learning in industry. Partly that is because many important tasks can be described crisply as estimating the probability of something unknown given a particular set of available data:

지도 학습은 특성과 레이블을 모두 포함하는 데이터 세트가 주어지고 입력 특성이 주어졌을 때 레이블을 예측하는 모델을 생성하도록 요청받는 작업을 설명합니다. 각 기능-레이블 쌍을 예시라고 합니다. 때로는 맥락이 명확할 때 해당 레이블을 알 수 없는 경우에도 입력 모음을 참조하기 위해 예제라는 용어를 사용할 수 있습니다. 매개변수를 선택하기 위해 우리(감독자)는 레이블이 지정된 예제로 구성된 데이터 세트를 모델에 제공하기 때문에 감독이 작동합니다. 확률론적 측면에서 우리는 일반적으로 입력 특징이 주어진 라벨의 조건부 확률을 추정하는 데 관심이 있습니다. 지도 학습은 여러 패러다임 중 하나일 뿐이지만 업계에서 기계 학습을 성공적으로 적용한 사례의 대부분을 차지합니다. 부분적으로는 많은 중요한 작업이 특정 사용 가능한 데이터 세트를 바탕으로 알려지지 않은 무언가의 확률을 추정하는 것으로 명확하게 설명될 수 있기 때문입니다.

- Predict cancer vs. not cancer, given a computer tomography image.

- 컴퓨터 단층촬영 이미지를 바탕으로 암과 암이 아닌 것을 예측해 보세요.

- Predict the correct translation in French, given a sentence in English.

- 영어로 주어진 문장에 대해 프랑스어로 올바른 번역을 예측합니다.

- Predict the price of a stock next month based on this month’s financial reporting data.

- 이번 달 재무 보고 데이터를 바탕으로 다음 달 주식 가격을 예측해 보세요.

While all supervised learning problems are captured by the simple description “predicting the labels given input features”, supervised learning itself can take diverse forms and require tons of modeling decisions, depending on (among other considerations) the type, size, and quantity of the inputs and outputs. For example, we use different models for processing sequences of arbitrary lengths and fixed-length vector representations. We will visit many of these problems in depth throughout this book.

모든 지도 학습 문제는 "입력 특성이 주어졌을 때 레이블을 예측한다"는 간단한 설명으로 포착되지만, 지도 학습 자체는 다양한 형태를 취할 수 있으며 (다른 고려 사항 중에서도) 입력 및 출력의 유형, 크기, 수량에 따라 수많은 모델링 결정이 필요할 수 있습니다. 예를 들어, 임의 길이의 시퀀스를 처리할 때와 고정 길이 벡터 표현을 처리할 때는 서로 다른 모델을 사용합니다. 우리는 이 책 전반에 걸쳐 이러한 많은 문제들을 심층적으로 다룰 것입니다.

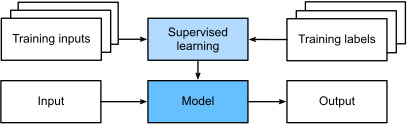

Informally, the learning process looks something like the following. First, grab a big collection of examples for which the features are known and select from them a random subset, acquiring the ground truth labels for each. Sometimes these labels might be available data that have already been collected (e.g., did a patient die within the following year?) and other times we might need to employ human annotators to label the data (e.g., assigning images to categories). Together, these inputs and corresponding labels comprise the training set. We feed the training dataset into a supervised learning algorithm, a function that takes as input a dataset and outputs another function: the learned model. Finally, we can feed previously unseen inputs to the learned model, using its outputs as predictions of the corresponding label. The full process is drawn in Fig. 1.3.1.

비공식적으로 학습 과정은 다음과 같습니다. 먼저, 기능이 알려진 대규모 예제 컬렉션을 수집하고 그 중에서 무작위 하위 집합을 선택하여 각각에 대한 실측 레이블을 획득합니다. 때로는 이러한 레이블이 이미 수집된 데이터일 수도 있고(예: 환자가 다음 해에 사망했습니까?) 다른 경우에는 인간 주석자를 고용하여 데이터에 레이블을 지정해야 할 수도 있습니다(예: 이미지를 카테고리에 할당). 이러한 입력과 해당 레이블이 함께 훈련 세트를 구성합니다. 훈련 데이터 세트를 지도 학습 알고리즘에 입력합니다. 이 알고리즘은 데이터 세트를 입력으로 받아 또 다른 기능인 학습된 모델을 출력합니다. 마지막으로, 출력을 해당 라벨의 예측으로 사용하여 이전에 볼 수 없었던 입력을 학습된 모델에 공급할 수 있습니다. 전체 과정은 그림 1.3.1에 그려져 있다.
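The idea that a learning algorithm is "a function that takes a dataset and outputs another function" can be made concrete with a minimal sketch. The example below uses a 1-nearest-neighbor rule as a stand-in learner; that choice, the name `nearest_neighbor_learner`, and the tiny dataset are all illustrative assumptions, not something the text prescribes.

```python
from typing import Callable, List, Tuple

Example = Tuple[List[float], float]  # (features, label)


def nearest_neighbor_learner(
    training_set: List[Example],
) -> Callable[[List[float]], float]:
    """A toy learning algorithm: takes a dataset, returns a model (a function)."""

    def model(features: List[float]) -> float:
        # Predict the label of the closest training example
        # (squared Euclidean distance in feature space).
        def sq_dist(example: Example) -> float:
            return sum((a - b) ** 2 for a, b in zip(example[0], features))

        return min(training_set, key=sq_dist)[1]

    return model


# Hypothetical labeled examples: (sq. footage, bedrooms) -> sale price.
training_set = [([600.0, 1.0], 500_000.0), ([3000.0, 4.0], 400_000.0)]
model = nearest_neighbor_learner(training_set)

# Feed a previously unseen input to the learned model.
prediction = model([700.0, 1.0])
```

Real learners return far richer models, but the shape is the same: data goes in, a prediction function comes out.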

1.3.1.1. Regression

Perhaps the simplest supervised learning task to wrap your head around is regression. Consider, for example, a set of data harvested from a database of home sales. We might construct a table, in which each row corresponds to a different house, and each column corresponds to some relevant attribute, such as the square footage of a house, the number of bedrooms, the number of bathrooms, and the number of minutes (walking) to the center of town. In this dataset, each example would be a specific house, and the corresponding feature vector would be one row in the table. If you live in New York or San Francisco, and you are not the CEO of Amazon, Google, Microsoft, or Facebook, the (sq. footage, no. of bedrooms, no. of bathrooms, walking distance) feature vector for your home might look something like: [600,1,1,60]. However, if you live in Pittsburgh, it might look more like [3000,4,3,10]. Fixed-length feature vectors like this are essential for most classic machine learning algorithms.

아마도 가장 이해하기 쉬운 지도 학습 작업은 회귀일 것입니다. 예를 들어, 주택 판매 데이터베이스에서 수집된 데이터 세트를 생각해 보세요. 각 행은 서로 다른 집에 해당하고 각 열은 집의 면적, 침실 수, 욕실 수, 시내 중심까지의 도보 시간(분)과 같은 관련 속성에 해당하는 테이블을 구성할 수 있습니다. 이 데이터 세트에서 각 예는 특정 주택이고 해당 특징 벡터는 테이블의 한 행이 됩니다. 귀하가 뉴욕이나 샌프란시스코에 거주하고 Amazon, Google, Microsoft 또는 Facebook의 CEO가 아니라면 귀하의 집에 대한 (평방피트, 침실 수, 욕실 수, 도보 거리) 특징 벡터는 [600,1,1,60]과 같이 보일 수 있습니다. 그러나 피츠버그에 거주하는 경우에는 [3000,4,3,10]과 유사하게 보일 수 있습니다. 이와 같은 고정 길이 특징 벡터는 대부분의 고전적인 기계 학습 알고리즘에 필수적입니다.

What makes a problem a regression is actually the form of the target. Say that you are in the market for a new home. You might want to estimate the fair market value of a house, given some features such as above. The data here might consist of historical home listings and the labels might be the observed sales prices. When labels take on arbitrary numerical values (even within some interval), we call this a regression problem. The goal is to produce a model whose predictions closely approximate the actual label values.

문제를 회귀로 만드는 것은 실제로 대상의 형태입니다. 당신이 새 집을 구하려고 시장에 있다고 가정해 보세요. 위와 같은 일부 기능을 고려하여 주택의 공정한 시장 가치를 추정할 수 있습니다. 여기의 데이터는 과거 주택 목록으로 구성될 수 있으며 레이블은 관찰된 판매 가격일 수 있습니다. 레이블이 임의의 숫자 값을 취하는 경우(특정 간격 내에서도) 이를 회귀 문제라고 합니다. 목표는 예측이 실제 레이블 값과 매우 유사한 모델을 생성하는 것입니다.

Lots of practical problems are easily described as regression problems. Predicting the rating that a user will assign to a movie can be thought of as a regression problem and if you designed a great algorithm to accomplish this feat in 2009, you might have won the 1-million-dollar Netflix prize. Predicting the length of stay for patients in the hospital is also a regression problem. A good rule of thumb is that any “how much?” or “how many?” problem is likely to be regression. For example:

많은 실제 문제는 회귀 문제로 쉽게 설명됩니다. 사용자가 영화에 부여할 등급을 예측하는 것은 회귀 문제로 생각할 수 있으며, 2009년에 이 업적을 달성하기 위한 훌륭한 알고리즘을 설계했다면 백만 달러의 Netflix 상금을 받을 수도 있었을 것입니다. 환자의 병원 입원 기간을 예측하는 것도 회귀 문제입니다. 경험상 "얼마나 많이?" 또는 "몇 개?"를 묻는 문제는 회귀일 가능성이 높습니다. 예를 들어:

- How many hours will this surgery take?

- 이 수술은 몇 시간 정도 걸리나요?

- How much rainfall will this town have in the next six hours?

- 앞으로 6시간 동안 이 마을에는 얼마나 많은 비가 내릴까요?

Even if you have never worked with machine learning before, you have probably worked through a regression problem informally. Imagine, for example, that you had your drains repaired and that your contractor spent 3 hours removing gunk from your sewage pipes. Then they sent you a bill of 350 dollars. Now imagine that your friend hired the same contractor for 2 hours and received a bill of 250 dollars. If someone then asked you how much to expect on their upcoming gunk-removal invoice you might make some reasonable assumptions, such as more hours worked costs more dollars. You might also assume that there is some base charge and that the contractor then charges per hour. If these assumptions held true, then given these two data examples, you could already identify the contractor’s pricing structure: 100 dollars per hour plus 50 dollars to show up at your house. If you followed that much, then you already understand the high-level idea behind linear regression.

이전에 기계 학습을 사용해 본 적이 없더라도 아마도 비공식적으로 회귀 문제를 해결해 본 적이 있을 것입니다. 예를 들어, 배수관을 수리했고 계약자가 하수관에서 오물을 제거하는 데 3시간을 소비했다고 상상해 보십시오. 그런 다음 그들은 당신에게 350달러짜리 청구서를 보냈습니다. 이제 당신의 친구가 같은 계약자를 2시간 동안 고용하고 250달러의 청구서를 받았다고 상상해 보십시오. 그런 다음 누군가가 다가오는 오물 제거 청구서에서 얼마를 예상하는지 묻는다면, 더 많은 시간을 일하면 더 많은 비용이 든다는 등 몇 가지 합리적인 가정을 할 수 있습니다. 또한 기본 요금이 있고 계약자가 시간당 요금을 부과한다고 가정할 수도 있습니다. 이러한 가정이 사실이라면 이 두 가지 데이터 예를 통해 계약자의 가격 구조를 이미 식별할 수 있습니다. 즉, 시간당 100달러에 집에 도착하는 데 드는 비용 50달러입니다. 그렇게 많이 따라하셨다면 이미 선형 회귀 뒤에 숨은 고급 개념을 이해하신 것입니다.
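The contractor example amounts to solving two linear equations in two unknowns. A minimal sketch, assuming the bill follows `bill = rate * hours + base` (the variable and function names here are illustrative):

```python
# Two observed invoices from the text: (hours worked, bill in dollars).
hours_1, bill_1 = 3.0, 350.0
hours_2, bill_2 = 2.0, 250.0

# Assume bill = rate * hours + base. Subtracting the two equations
# eliminates the base charge and leaves the hourly rate.
rate = (bill_1 - bill_2) / (hours_1 - hours_2)
base = bill_1 - rate * hours_1


def predict_bill(hours: float) -> float:
    """Predict an invoice under the assumed linear pricing structure."""
    return rate * hours + base
```

With these two invoices the sketch recovers 100 dollars per hour and a 50-dollar base charge, so a hypothetical 4-hour job would be predicted at 450 dollars.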

In this case, we could produce the parameters that exactly matched the contractor’s prices. Sometimes this is not possible, e.g., if some of the variation arises from factors beyond the features you observe (here, only the hours worked). In these cases, we will try to learn models that minimize the distance between our predictions and the observed values. In most of our chapters, we will focus on minimizing the squared error loss function. As we will see later, this loss corresponds to the assumption that our data were corrupted by Gaussian noise.

이 경우 계약자의 가격과 정확히 일치하는 매개변수를 생성할 수 있었습니다. 예를 들어 일부 변형이 두 가지 기능 이외의 요인으로 인해 발생하는 경우에는 이것이 불가능할 수도 있습니다. 이러한 경우 예측과 관찰된 값 사이의 거리를 최소화하는 모델을 학습하려고 노력할 것입니다. 대부분의 장에서는 제곱 오류 손실 함수를 최소화하는 데 중점을 둘 것입니다. 나중에 살펴보겠지만, 이 손실은 데이터가 가우스 노이즈로 인해 손상되었다는 가정에 해당합니다.
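When no line fits every observation exactly, minimizing the squared error still picks out a single best line. The sketch below uses the standard closed-form least-squares solution for one feature; the third invoice is a made-up data point added only to make an exact fit impossible.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Return (slope, intercept) minimizing the sum of squared errors."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def squared_error(
    xs: List[float], ys: List[float], slope: float, intercept: float
) -> float:
    """Sum of squared differences between predictions and observations."""
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys))


# The two invoices from the text plus a hypothetical third one.
hours = [3.0, 2.0, 4.0]
bills = [350.0, 250.0, 480.0]
slope, intercept = fit_line(hours, bills)
```

On the original two invoices alone, `fit_line` reproduces the exact pricing structure; with the extra point it trades a small error on every invoice for a lower total squared error than the original line achieves.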

1.3.1.2. Classification

While regression models are great for addressing “how many?” questions, lots of problems do not fit comfortably in this template. Consider, for example, a bank that wants to develop a check scanning feature for its mobile app. Ideally, the customer would simply snap a photo of a check and the app would automatically recognize the text from the image. Assuming that we had some ability to segment out image patches corresponding to each handwritten character, then the primary remaining task would be to determine which character among some known set is depicted in each image patch. These kinds of “which one?” problems are called classification and require a different set of tools from those used for regression, although many techniques will carry over.

회귀 모델은 "얼마나 많이?"라는 질문을 다루는 데 유용하지만, 많은 문제가 이 틀에 잘 들어맞지 않습니다. 예를 들어, 모바일 앱용 수표 스캔 기능을 개발하려는 은행을 생각해 보십시오. 이상적으로는 고객이 수표 사진을 찍으면 앱이 자동으로 이미지의 텍스트를 인식합니다. 각 손으로 쓴 문자에 해당하는 이미지 패치를 분할할 수 있는 능력이 있다고 가정하면 남은 주요 작업은 알려진 세트 중 어떤 문자가 각 이미지 패치에 표시되는지 결정하는 것입니다. 이런 종류의 "어느 것?" 문제를 분류라고 하며, 회귀에 사용되는 도구와는 다른 도구 세트가 필요하지만 많은 기술이 그대로 적용됩니다.

In classification, we want our model to look at features, e.g., the pixel values in an image, and then predict to which category (sometimes called a class) among some discrete set of options, an example belongs. For handwritten digits, we might have ten classes, corresponding to the digits 0 through 9. The simplest form of classification is when there are only two classes, a problem which we call binary classification. For example, our dataset could consist of images of animals and our labels might be the classes {cat, dog}. Whereas in regression we sought a regressor to output a numerical value, in classification we seek a classifier, whose output is the predicted class assignment.

분류에서 우리는 모델이 특징(예: 이미지의 픽셀 값)을 살펴본 다음 일부 개별 옵션 집합 중 어떤 범주(클래스라고도 함)에 해당 예가 속하는지 예측하기를 원합니다. 손으로 쓴 숫자의 경우 숫자 0부터 9까지에 해당하는 10개의 클래스가 있을 수 있습니다. 분류의 가장 간단한 형태는 클래스가 두 개뿐인 경우인데, 이 문제를 이진 분류라고 합니다. 예를 들어 데이터세트는 동물 이미지로 구성될 수 있고 라벨은 {cat, dog} 클래스일 수 있습니다. 회귀에서는 숫자 값을 출력하기 위해 회귀자를 찾는 반면, 분류에서는 예측된 클래스 할당을 출력하는 분류기를 찾습니다.

For reasons that we will get into as the book gets more technical, it can be difficult to optimize a model that can only output a firm categorical assignment, e.g., either “cat” or “dog”. In these cases, it is usually much easier to express our model in the language of probabilities. Given features of an example, our model assigns a probability to each possible class. Returning to our animal classification example where the classes are {cat, dog}, a classifier might see an image and output the probability that the image is a cat as 0.9. We can interpret this number by saying that the classifier is 90% sure that the image depicts a cat. The magnitude of the probability for the predicted class conveys a notion of uncertainty. It is not the only one available and we will discuss others in chapters dealing with more advanced topics.

책이 좀 더 기술적으로 다루어질수록 "고양이" 또는 "개"와 같은 확고한 범주 할당만 출력할 수 있는 모델을 최적화하는 것은 어려울 수 있습니다. 이러한 경우 일반적으로 모델을 확률의 언어로 표현하는 것이 훨씬 쉽습니다. 예제의 특징이 주어지면 우리 모델은 가능한 각 클래스에 확률을 할당합니다. 클래스가 {cat, dog}인 동물 분류 예제로 돌아가면 분류자는 이미지를 보고 이미지가 고양이일 확률을 0.9로 출력할 수 있습니다. 분류자가 이미지가 고양이를 묘사한다고 90% 확신한다고 말함으로써 이 숫자를 해석할 수 있습니다. 예측 클래스에 대한 확률의 크기는 불확실성의 개념을 전달합니다. 이는 사용 가능한 유일한 것이 아니며 보다 고급 주제를 다루는 장에서 다른 항목에 대해 논의할 것입니다.
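One common way to turn raw model outputs into a probability for each class is the softmax function. The section does not name a specific method, so treat softmax here as an assumed choice, and the raw scores as made-up numbers picked to reproduce the 0.9 from the text.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Map arbitrary real scores to probabilities that sum to one."""
    shift = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


classes = ["cat", "dog"]
scores = [math.log(9.0), 0.0]  # hypothetical raw model outputs
probs = softmax(scores)

# The firm categorical decision is recovered by picking the largest probability.
predicted = classes[probs.index(max(probs))]
```

Here the classifier assigns roughly 0.9 to "cat" and 0.1 to "dog", which matches the "90% sure" reading in the text.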

When we have more than two possible classes, we call the problem multiclass classification. Common examples include handwritten character recognition {0, 1, 2, ... 9, a, b, c, ...}. While we attacked regression problems by trying to minimize the squared error loss function, the common loss function for classification problems is called cross-entropy, whose name will be demystified when we introduce information theory in later chapters.

가능한 클래스가 2개 이상인 경우 문제를 다중클래스 분류라고 합니다. 일반적인 예로는 필기 문자 인식 {0, 1, 2, ... 9, a, b, c, ...}가 있습니다. 우리는 제곱 오류 손실 함수를 최소화하려고 노력하여 회귀 문제를 공격했지만, 분류 문제에 대한 일반적인 손실 함수는 교차 엔트로피라고 하며, 이후 장에서 정보 이론을 소개할 때 이름이 이해될 것입니다.
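For a single example whose true class is known, the cross-entropy loss reduces to the negative log of the probability the model assigned to that class. A minimal sketch (the function name and the probabilities are illustrative):

```python
import math
from typing import List


def cross_entropy(probs: List[float], true_class: int) -> float:
    """Negative log-probability assigned to the correct class."""
    return -math.log(probs[true_class])


# A classifier that outputs [P(cat), P(dog)] = [0.9, 0.1].
loss_if_cat = cross_entropy([0.9, 0.1], true_class=0)  # confident and right
loss_if_dog = cross_entropy([0.9, 0.1], true_class=1)  # confident and wrong
```

The loss is small when the model puts high probability on the correct class and grows quickly when it is confidently wrong.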

Note that the most likely class is not necessarily the one that you are going to use for your decision. Assume that you find a beautiful mushroom in your backyard as shown in Fig. 1.3.2.

가장 가능성이 높은 클래스가 반드시 결정에 사용할 클래스는 아닙니다. 그림 1.3.2와 같이 뒷마당에서 아름다운 버섯을 발견했다고 가정합니다.

Now, assume that you built a classifier and trained it to predict whether a mushroom is poisonous based on a photograph. Say our poison-detection classifier outputs that the probability that Fig. 1.3.2 shows a death cap is 0.2. In other words, the classifier is 80% sure that our mushroom is not a death cap. Still, you would have to be a fool to eat it. That is because the certain benefit of a delicious dinner is not worth a 20% risk of dying from it. In other words, the effect of the uncertain risk outweighs the benefit by far. Thus, in order to make a decision about whether to eat the mushroom, we need to compute the expected detriment associated with each action which depends both on the likely outcomes and the benefits or harms associated with each. In this case, the detriment incurred by eating the mushroom might be 0.2×∞+0.8×0=∞, whereas the loss of discarding it is 0.2×0+0.8×1=0.8. Our caution was justified: as any mycologist would tell us, the mushroom in Fig. 1.3.2 is actually a death cap.

이제 분류기를 구축하고 사진을 기반으로 버섯의 독성 여부를 예측하도록 훈련했다고 가정해 보겠습니다. 독버섯 감지 분류기가 그림 1.3.2가 알광대버섯(death cap)을 보여줄 확률이 0.2라고 출력한다고 가정해 보겠습니다. 즉, 분류기는 우리 버섯이 알광대버섯이 아니라고 80% 확신합니다. 그래도 그것을 먹는다면 바보일 것입니다. 맛있는 저녁 식사라는 확실한 이익은 그로 인해 사망할 20%의 위험을 감수할 만한 가치가 없기 때문입니다. 즉, 불확실한 위험의 영향이 이익보다 훨씬 큽니다. 따라서 버섯을 먹을지 여부를 결정하려면 각 행동과 관련된 예상 피해를 계산해야 하며, 이는 가능한 결과와 각 결과에 따른 이익 또는 해악 모두에 따라 달라집니다. 이 경우, 버섯을 먹음으로써 발생하는 손해는 0.2×∞+0.8×0=∞인 반면, 버섯을 버리는 손실은 0.2×0+0.8×1=0.8이 됩니다. 우리의 주의는 정당했습니다. 어느 균류학자라도 말해 주겠지만 그림 1.3.2의 버섯은 실제로 알광대버섯입니다.
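The mushroom decision can be written out as an expected-loss calculation. The sketch below uses the probabilities and losses from the text; the dictionary layout and names are illustrative.

```python
import math

p_death_cap = 0.2
probs = {"death_cap": p_death_cap, "edible": 1.0 - p_death_cap}

# loss[action][outcome]: detriment of taking an action given the true outcome.
loss = {
    "eat": {"death_cap": math.inf, "edible": 0.0},
    "discard": {"death_cap": 0.0, "edible": 1.0},
}


def expected_loss(action: str) -> float:
    """Average the loss of an action over the possible outcomes."""
    return sum(probs[outcome] * loss[action][outcome] for outcome in probs)


# Choose the action with the smallest expected loss,
# not the one matching the most likely class.
best_action = min(loss, key=expected_loss)
```

Even though "edible" is the most likely class, the action with the lowest expected loss is to discard the mushroom.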

Classification can get much more complicated than just binary or multiclass classification. For instance, there are some variants of classification addressing hierarchically structured classes. In such cases not all errors are equal—if we must err, we might prefer to misclassify to a related class rather than a distant class. Usually, this is referred to as hierarchical classification. For inspiration, you might think of Linnaeus, who organized fauna in a hierarchy.

분류는 이진 또는 다중 클래스 분류보다 훨씬 더 복잡해질 수 있습니다. 예를 들어, 계층적으로 구조화된 클래스를 다루는 몇 가지 분류 변형이 있습니다. 이러한 경우 모든 오류가 동일하지는 않습니다. 오류가 발생한다면 먼 클래스보다는 관련 클래스로 잘못 분류하는 것을 선호할 수 있습니다. 일반적으로 이를 계층적 분류라고 합니다. 영감을 얻으려면 동물군을 계층 구조로 조직한 린네(Linnaeus)를 생각해 보세요.

In the case of animal classification, it might not be so bad to mistake a poodle for a schnauzer, but our model would pay a huge penalty if it confused a poodle with a dinosaur. Which hierarchy is relevant might depend on how you plan to use the model. For example, rattlesnakes and garter snakes might be close on the phylogenetic tree, but mistaking a rattler for a garter could have fatal consequences.

동물 분류의 경우 푸들을 슈나우저로 착각하는 것은 그리 나쁘지 않을 수 있지만, 푸들을 공룡과 혼동하면 우리 모델은 엄청난 페널티를 지불하게 됩니다. 어떤 계층 구조가 관련되는지는 모델 사용 계획에 따라 달라질 수 있습니다. 예를 들어, 방울뱀과 가터뱀은 계통수에서 가까울 수 있지만 방울뱀을 가터뱀으로 착각하면 치명적인 결과를 초래할 수 있습니다.

1.3.1.3. Tagging

Some classification problems fit neatly into the binary or multiclass classification setups. For example, we could train a normal binary classifier to distinguish cats from dogs. Given the current state of computer vision, we can do this easily, with off-the-shelf tools. Nonetheless, no matter how accurate our model gets, we might find ourselves in trouble when the classifier encounters an image of the Town Musicians of Bremen, a popular German fairy tale featuring four animals (Fig. 1.3.3).

일부 분류 문제는 이진 또는 다중 클래스 분류 설정에 딱 맞습니다. 예를 들어, 고양이와 개를 구별하기 위해 일반 이진 분류기를 훈련시킬 수 있습니다. 컴퓨터 비전의 현재 상태를 고려하면 기성 도구를 사용하여 이 작업을 쉽게 수행할 수 있습니다. 그럼에도 불구하고 모델이 아무리 정확하더라도 분류자가 네 마리의 동물이 등장하는 독일의 유명한 동화인 브레멘의 음악대 이미지를 발견하면 문제가 발생할 수 있습니다(그림 1.3.3).

As you can see, the photo features a cat, a rooster, a dog, and a donkey, with some trees in the background. If we anticipate encountering such images, multiclass classification might not be the right problem formulation. Instead, we might want to give the model the option of saying the image depicts a cat, a dog, a donkey, and a rooster.

보시다시피, 사진에는 나무 몇 그루를 배경으로 고양이, 수탉, 개, 당나귀가 등장합니다. 그러한 이미지가 나타날 것으로 예상된다면 다중 클래스 분류가 올바른 문제 공식화가 아닐 수도 있습니다. 대신, 이미지가 고양이, 개, 당나귀, 수탉을 묘사한다고 말할 수 있는 옵션을 모델에 제공할 수 있습니다.

The problem of learning to predict classes that are not mutually exclusive is called multi-label classification. Auto-tagging problems are typically best described in terms of multi-label classification. Think of the tags people might apply to posts on a technical blog, e.g., “machine learning”, “technology”, “gadgets”, “programming languages”, “Linux”, “cloud computing”, “AWS”. A typical article might have 5–10 tags applied. Typically, tags will exhibit some correlation structure. Posts about “cloud computing” are likely to mention “AWS” and posts about “machine learning” are likely to mention “GPUs”.

상호 배타적이지 않은 클래스를 예측하는 학습 문제를 다중 레이블 분류라고 합니다. 자동 태그 추가 문제는 일반적으로 다중 라벨 분류 측면에서 가장 잘 설명됩니다. 사람들이 기술 블로그의 게시물에 적용할 수 있는 태그(예: "기계 학습", "기술", "가젯", "프로그래밍 언어", "Linux", "클라우드 컴퓨팅", "AWS")를 생각해 보세요. 일반적인 기사에는 5~10개의 태그가 적용될 수 있습니다. 일반적으로 태그는 일부 상관 구조를 나타냅니다. "클라우드 컴퓨팅"에 대한 게시물에서는 "AWS"가 언급될 가능성이 높으며 "머신 러닝"에 대한 게시물에서는 "GPU"가 언급될 가능성이 높습니다.
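In contrast to multiclass classification, a multi-label model can switch each tag on or off independently. One simple way to do this, assumed here for illustration, is to pass a separate score for each tag through a sigmoid and keep every tag whose probability clears a threshold. The scores below are invented.

```python
import math
from typing import Dict, List


def sigmoid(x: float) -> float:
    """Squash a real-valued score into a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def predict_tags(scores: Dict[str, float], threshold: float = 0.5) -> List[str]:
    """Keep every tag whose independent probability reaches the threshold."""
    return [tag for tag, s in scores.items() if sigmoid(s) >= threshold]


# Hypothetical per-tag scores for the Town Musicians of Bremen image.
scores = {"cat": 2.1, "dog": 1.3, "donkey": 0.4, "rooster": 0.9, "dinosaur": -3.0}
tags = predict_tags(scores)
```

Because the probabilities are not forced to sum to one, the model can report all four animals at once. Treating tags independently ignores the correlation structure mentioned above, which is one reason practical systems are more elaborate.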

Sometimes such tagging problems draw on enormous label sets. The National Library of Medicine employs many professional annotators who associate each article to be indexed in PubMed with a set of tags drawn from the Medical Subject Headings (MeSH) ontology, a collection of roughly 28,000 tags. Correctly tagging articles is important because it allows researchers to conduct exhaustive reviews of the literature. This is a time-consuming process and typically there is a one-year lag between archiving and tagging. Machine learning can provide provisional tags until each article has a proper manual review. Indeed, for several years, the BioASQ organization has hosted competitions for this task.

때때로 이러한 태깅 문제는 엄청난 양의 레이블 세트를 필요로 합니다. 국립 의학 도서관(National Library of Medicine)은 PubMed에 색인될 각 기사를 약 28,000개의 태그 모음인 MeSH(Medical Subject Headings) 온톨로지에서 가져온 태그 세트와 연결하는 많은 전문 주석자를 고용합니다. 기사에 올바른 태그를 지정하는 것은 연구자가 문헌을 철저하게 검토할 수 있도록 해주기 때문에 중요합니다. 이는 시간이 많이 걸리는 프로세스이며 일반적으로 보관과 태그 지정 사이에 1년의 시차가 있습니다. 기계 학습은 각 기사가 적절한 수동 검토를 받을 때까지 임시 태그를 제공할 수 있습니다. 실제로 몇 년 동안 BioASQ 조직은 이 작업을 위한 대회를 주최해 왔습니다.

1.3.1.4. Search

In the field of information retrieval, we often impose ranks on sets of items. Take web search for example. The goal is less to determine whether a particular page is relevant for a query, but rather which, among a set of relevant results, should be shown most prominently to a particular user. One way of doing this might be to first assign a score to every element in the set and then to retrieve the top-rated elements. PageRank, the original secret sauce behind the Google search engine, was an early example of such a scoring system. Weirdly, the scoring provided by PageRank did not depend on the actual query. Instead, they relied on a simple relevance filter to identify the set of relevant candidates and then used PageRank to prioritize the more authoritative pages. Nowadays, search engines use machine learning and behavioral models to obtain query-dependent relevance scores. There are entire academic conferences devoted to this subject.

정보 검색 분야에서는 항목 집합에 순위를 부여하는 경우가 많습니다. 예를 들어 웹 검색을 생각해 보세요. 목표는 특정 페이지가 검색어와 관련이 있는지 판단하는 것보다 관련 결과 집합 중에서 특정 사용자에게 가장 눈에 띄게 표시되어야 하는 페이지를 결정하는 것입니다. 이를 수행하는 한 가지 방법은 먼저 세트의 모든 요소에 점수를 할당한 다음 최고 등급 요소를 검색하는 것입니다. Google 검색 엔진의 원래 비밀 소스인 PageRank는 이러한 점수 시스템의 초기 예였습니다. 이상하게도 PageRank에서 제공하는 점수는 실제 쿼리에 의존하지 않았습니다. 대신 간단한 관련성 필터를 사용하여 관련성 있는 후보 집합을 식별한 다음 PageRank를 사용하여 더 권위 있는 페이지의 우선 순위를 지정했습니다. 요즘 검색 엔진은 기계 학습 및 행동 모델을 사용하여 쿼리에 따른 관련성 점수를 얻습니다. 이 주제에 전념하는 전체 학술 회의가 있습니다.
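The two-stage scheme described above, a simple relevance filter followed by ranking with a query-independent score, can be sketched as follows. The pages, URLs, and authority scores are hypothetical, and the keyword filter is a deliberately crude stand-in for a real relevance filter; this is not an implementation of PageRank itself.

```python
from typing import Dict, List

# Hypothetical index: page text plus a query-independent authority score.
pages: Dict[str, dict] = {
    "a.example/deep-learning": {"text": "deep learning tutorial", "authority": 0.9},
    "b.example/learning-blog": {"text": "my deep learning notes", "authority": 0.2},
    "c.example/cooking": {"text": "deep dish pizza recipe", "authority": 0.95},
}


def search(query: str, k: int = 10) -> List[str]:
    """Filter pages containing all query terms, then rank by authority."""
    terms = set(query.lower().split())
    candidates = [
        url for url, page in pages.items()
        if terms <= set(page["text"].lower().split())
    ]
    # The ranking score does not depend on the query, only on the page.
    return sorted(candidates, key=lambda u: pages[u]["authority"], reverse=True)[:k]


results = search("deep learning")
```

The cooking page has the highest authority but never appears, because it fails the relevance filter before ranking takes place.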

1.3.1.5. Recommender Systems

Recommender systems are another problem setting that is related to search and ranking. The problems are similar insofar as the goal is to display a set of items relevant to the user. The main difference is the emphasis on personalization to specific users in the context of recommender systems. For instance, for movie recommendations, the results page for a science fiction fan and the results page for a connoisseur of Peter Sellers comedies might differ significantly. Similar problems pop up in other recommendation settings, e.g., for retail products, music, and news recommendation.

추천 시스템은 검색 및 순위와 관련된 또 다른 문제 설정입니다. 사용자와 관련된 일련의 항목을 표시하는 것이 목표라는 점에서 문제는 유사합니다. 주요 차이점은 추천 시스템의 맥락에서 특정 사용자에 대한 개인화를 강조한다는 것입니다. 예를 들어 영화 추천의 경우 SF 팬의 결과 페이지와 Peter Sellers 코미디 감정가의 결과 페이지가 크게 다를 수 있습니다. 소매 제품, 음악, 뉴스 추천과 같은 다른 추천 설정에서도 비슷한 문제가 나타납니다.

In some cases, customers provide explicit feedback, communicating how much they liked a particular product (e.g., the product ratings and reviews on Amazon, IMDb, or Goodreads). In other cases, they provide implicit feedback, e.g., by skipping titles on a playlist, which might indicate dissatisfaction or maybe just indicate that the song was inappropriate in context. In the simplest formulations, these systems are trained to estimate some score, such as an expected star rating or the probability that a given user will purchase a particular item.

어떤 경우에는 고객이 특정 제품을 얼마나 좋아하는지 알리는 명시적인 피드백을 제공합니다(예: Amazon, IMDb 또는 Goodreads의 제품 평가 및 리뷰). 다른 경우에는 재생 목록의 제목을 건너뛰는 등 암시적인 피드백을 제공하는데, 이는 불만족을 나타내거나 노래가 상황에 부적절하다는 것을 나타낼 수도 있습니다. 가장 간단한 공식에서 이러한 시스템은 예상 별점이나 특정 사용자가 특정 항목을 구매할 확률과 같은 일부 점수를 추정하도록 훈련되었습니다.

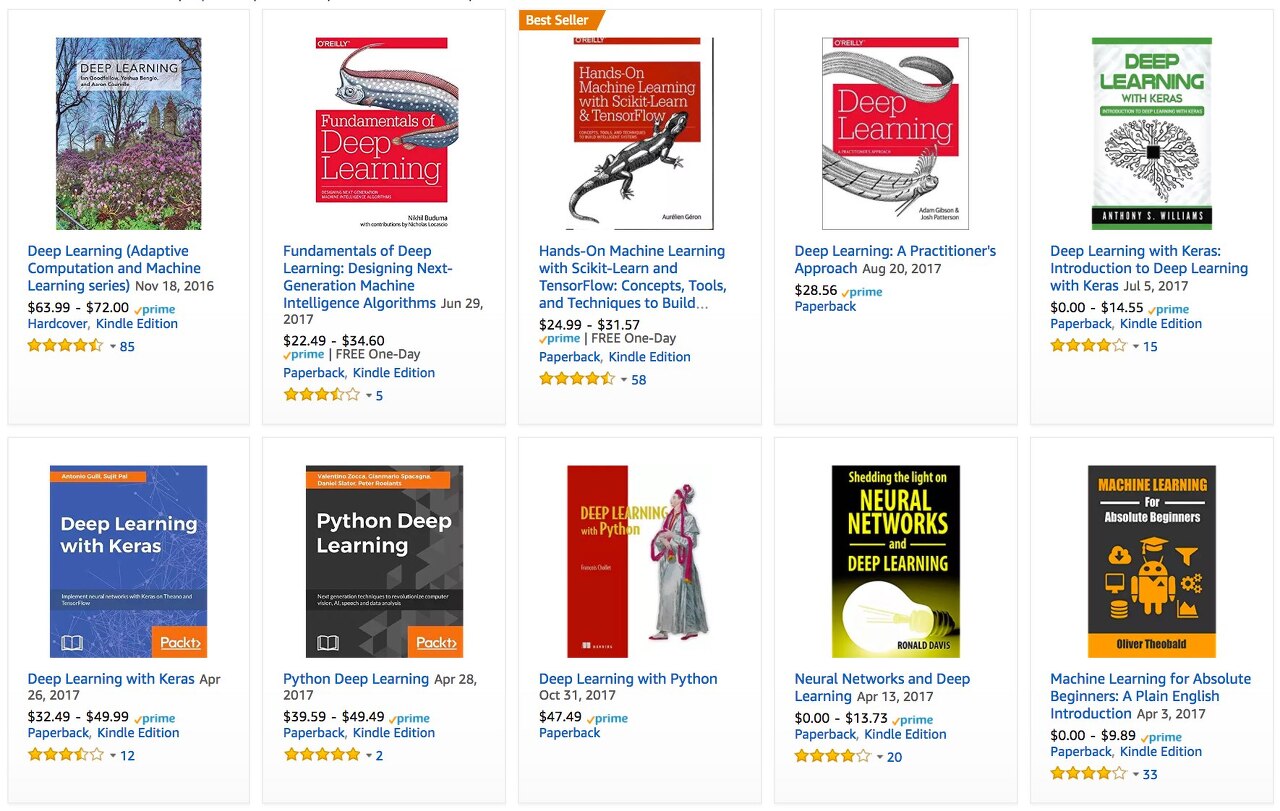

Given such a model, for any given user, we could retrieve the set of objects with the largest scores, which could then be recommended to the user. Production systems are considerably more advanced and take detailed user activity and item characteristics into account when computing such scores. Fig. 1.3.4 displays the deep learning books recommended by Amazon based on personalization algorithms tuned to capture Aston’s preferences.

그러한 모델이 주어지면 특정 사용자에 대해 가장 높은 점수를 가진 객체 세트를 검색할 수 있으며 이를 사용자에게 추천할 수 있습니다. 생산 시스템은 훨씬 더 발전되었으며 이러한 점수를 계산할 때 상세한 사용자 활동과 항목 특성을 고려합니다. 그림 1.3.4는 Aston의 선호도를 포착하도록 조정된 개인화 알고리즘을 기반으로 Amazon에서 추천하는 딥러닝 도서를 표시합니다.
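Retrieving the highest-scoring items for a user can be sketched in a few lines. Here the score is assumed to be a dot product between a user vector and an item vector, which is one common formulation rather than anything the text specifies; all vectors and names are invented.

```python
from typing import Dict, List

# Hypothetical 2-d taste vectors: [science fiction, comedy].
item_factors: Dict[str, List[float]] = {
    "sci_fi_epic": [0.9, 0.1],
    "slapstick_comedy": [0.1, 0.9],
    "space_parody": [0.6, 0.6],
}
user_factors: Dict[str, List[float]] = {
    "sci_fi_fan": [1.0, 0.0],
    "comedy_fan": [0.0, 1.0],
}


def score(user: str, item: str) -> float:
    """Estimated preference of a user for an item (dot product)."""
    return sum(u * i for u, i in zip(user_factors[user], item_factors[item]))


def recommend(user: str, k: int = 2) -> List[str]:
    """Return the k items with the largest estimated scores for this user."""
    return sorted(item_factors, key=lambda item: score(user, item), reverse=True)[:k]
```

The same catalog yields different result lists for the two users, which is the personalization the section emphasizes.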

Despite their tremendous economic value, recommender systems naively built on top of predictive models suffer some serious conceptual flaws. To start, we only observe censored feedback: users preferentially rate movies that they feel strongly about. For example, on a five-point scale, you might notice that items receive many one- and five-star ratings but that there are conspicuously few three-star ratings. Moreover, current purchase habits are often a result of the recommendation algorithm currently in place, but learning algorithms do not always take this detail into account. Thus it is possible for feedback loops to form where a recommender system preferentially pushes an item that is then taken to be better (due to greater purchases) and in turn is recommended even more frequently. Many of these problems—about how to deal with censoring, incentives, and feedback loops—are important open research questions.

엄청난 경제적 가치에도 불구하고 예측 모델 위에 순진하게 구축된 추천 시스템은 몇 가지 심각한 개념적 결함을 안고 있습니다. 우선 우리는 검열된 피드백만 관찰합니다. 사용자는 자신이 강하게 느끼는 영화를 우선적으로 평가합니다. 예를 들어, 5점 척도에서 항목이 별 1개 및 5개 등급을 많이 받았지만 별 3개 등급은 눈에 띄게 적다는 것을 알 수 있습니다. 더욱이 현재의 구매 습관은 현재 시행 중인 추천 알고리즘의 결과인 경우가 많지만, 학습 알고리즘이 항상 이러한 세부 사항을 고려하는 것은 아닙니다. 따라서 추천 시스템이 항목을 우선적으로 푸시한 후 더 나은 것으로 간주되고(구매 증가로 인해) 더 자주 추천되는 피드백 루프가 형성될 수 있습니다. 검열, 인센티브 및 피드백 루프를 처리하는 방법에 관한 이러한 문제 중 상당수는 중요한 공개 연구 질문입니다.

1.3.1.6. Sequence Learning

So far, we have looked at problems where we have some fixed number of inputs and produce a fixed number of outputs. For example, we considered predicting house prices given a fixed set of features: square footage, number of bedrooms, number of bathrooms, and the transit time to downtown. We also discussed mapping from an image (of fixed dimension) to the predicted probabilities that it belongs to each among a fixed number of classes and predicting star ratings associated with purchases based on the user ID and product ID alone. In these cases, once our model is trained, after each test example is fed into our model, it is immediately forgotten. We assumed that successive observations were independent and thus there was no need to hold on to this context.

지금까지 우리는 고정된 수의 입력이 있고 고정된 수의 출력을 생성하는 문제를 살펴보았습니다. 예를 들어 우리는 면적, 침실 수, 욕실 수, 시내까지의 이동 시간 등 고정된 특성 세트를 바탕으로 주택 가격을 예측하는 것을 고려했습니다. 또한 (고정 차원의) 이미지를 고정된 수의 클래스 중 각각에 속하는 예측 확률로 매핑하고 사용자 ID와 제품 ID만을 기반으로 구매와 관련된 별점 예측에 대해 논의했습니다. 이러한 경우 모델이 훈련되고 나면 각 테스트 예제가 모델에 입력된 후 즉시 잊어버립니다. 우리는 연속적인 관찰이 독립적이므로 이러한 맥락을 붙잡을 필요가 없다고 가정했습니다.

But how should we deal with video snippets? In this case, each snippet might consist of a different number of frames. And our guess of what is going on in each frame might be much stronger if we take into account the previous or succeeding frames. The same goes for language. For example, one popular deep learning problem is machine translation: the task of ingesting sentences in some source language and predicting their translations in another language.

하지만 비디오 스니펫을 어떻게 처리해야 할까요? 이 경우 각 조각은 서로 다른 수의 프레임으로 구성될 수 있습니다. 그리고 각 프레임에서 무슨 일이 일어나고 있는지에 대한 우리의 추측은 이전 또는 후속 프레임을 고려하면 훨씬 더 강력해질 수 있습니다. 언어도 마찬가지다. 예를 들어, 인기 있는 딥 러닝 문제 중 하나는 기계 번역입니다. 즉, 일부 소스 언어로 문장을 수집하고 다른 언어로 번역을 예측하는 작업입니다.

Such problems also occur in medicine. We might want a model to monitor patients in the intensive care unit and to fire off alerts whenever their risk of dying in the next 24 hours exceeds some threshold. Here, we would not throw away everything that we know about the patient history every hour, because we might not want to make predictions based only on the most recent measurements.

이러한 문제는 의학에서도 발생합니다. 우리는 중환자실에 있는 환자를 모니터링하고 향후 24시간 이내에 사망 위험이 특정 임계값을 초과할 때마다 경고를 울리는 모델을 원할 수 있습니다. 여기서는 매시간 환자 이력에 대해 알고 있는 모든 정보를 버리지 않을 것입니다. 왜냐하면 가장 최근의 측정값만을 기반으로 예측을 하고 싶지 않을 수도 있기 때문입니다.
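To illustrate what it means for a model to hold on to context, the toy monitor below carries a running summary across time steps instead of judging each measurement in isolation. The exponential moving average, the threshold, and the readings are all made-up assumptions with no clinical meaning.

```python
from typing import List


def monitor(readings: List[float], threshold: float, alpha: float = 0.5) -> List[int]:
    """Return the time steps at which the running summary exceeds the threshold."""
    if not readings:
        return []
    alerts: List[int] = []
    state = readings[0]  # the state summarizes everything seen so far
    for t, x in enumerate(readings):
        # Blend the newest measurement with the accumulated history.
        state = alpha * x + (1 - alpha) * state
        if state > threshold:
            alerts.append(t)
    return alerts


# Hypothetical hourly measurements for one patient.
readings = [80.0, 85.0, 130.0, 135.0, 140.0]
alerts = monitor(readings, threshold=110.0)
```

A rule that looked only at the most recent value would fire at step 2; the stateful version waits until the elevated readings persist and first fires at step 3.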

Questions like these are among the most exciting applications of machine learning and they are instances of sequence learning. They require a model either to ingest sequences of inputs or to emit sequences of outputs (or both). Specifically, sequence-to-sequence learning considers problems where both inputs and outputs consist of variable-length sequences. Examples include machine translation and speech-to-text transcription. While it is impossible to consider all types of sequence transformations, the following special cases are worth mentioning.

이와 같은 질문은 기계 학습의 가장 흥미로운 응용 프로그램 중 하나이며 시퀀스 학습의 예입니다. 입력 시퀀스를 수집하거나 출력 시퀀스(또는 둘 다)를 내보내는 모델이 필요합니다. 특히, 시퀀스 간 학습은 입력과 출력이 모두 가변 길이 시퀀스로 구성된 문제를 고려합니다. 예로는 기계 번역, 음성-텍스트 변환 등이 있습니다. 모든 유형의 시퀀스 변환을 고려하는 것은 불가능하지만 다음과 같은 특별한 경우는 언급할 가치가 있습니다.

Tagging and Parsing. This involves annotating a text sequence with attributes. Here, the inputs and outputs are aligned, i.e., they are of the same number and occur in a corresponding order. For instance, in part-of-speech (PoS) tagging, we annotate every word in a sentence with the corresponding part of speech, e.g., “noun” or “direct object”. Alternatively, we might want to know which groups of contiguous words refer to named entities, like people, places, or organizations. In the cartoonishly simple example below, we might just want to indicate whether or not any word in the sentence is part of a named entity (tagged as “Ent”).

태그 지정 및 구문 분석. 여기에는 속성으로 텍스트 시퀀스에 주석을 추가하는 작업이 포함됩니다. 여기서 입력과 출력은 정렬됩니다. 즉, 동일한 수를 가지며 해당 순서로 발생합니다. 예를 들어, 품사(PoS) 태깅에서는 문장의 모든 단어에 해당 품사(예: "명사" 또는 "직접 목적어")를 주석으로 추가합니다. 또는 사람, 장소 또는 조직과 같은 명명된 엔터티를 나타내는 연속 단어 그룹이 무엇인지 알고 싶을 수도 있습니다. 아래의 만화처럼 간단한 예에서는 문장의 단어가 명명된 엔터티("Ent"로 태그 지정됨)의 일부인지 여부를 표시하고 싶을 수도 있습니다.

| Tom | has | dinner | in | Washington | with | Sally |
|---|---|---|---|---|---|---|
| Ent | - | - | - | Ent | - | Ent |
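The alignment property of tagging, one output per input in the same order, is easy to see in code. This small sketch pairs each word of the example sentence with its tag:

```python
tokens = "Tom has dinner in Washington with Sally".split()
tags = ["Ent", "-", "-", "-", "Ent", "-", "Ent"]

# Aligned sequences: exactly one tag per token, in the same order.
assert len(tokens) == len(tags)

tagged = list(zip(tokens, tags))
entities = [token for token, tag in tagged if tag == "Ent"]
```

Here `entities` collects the words marked as part of a named entity: Tom, Washington, and Sally.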

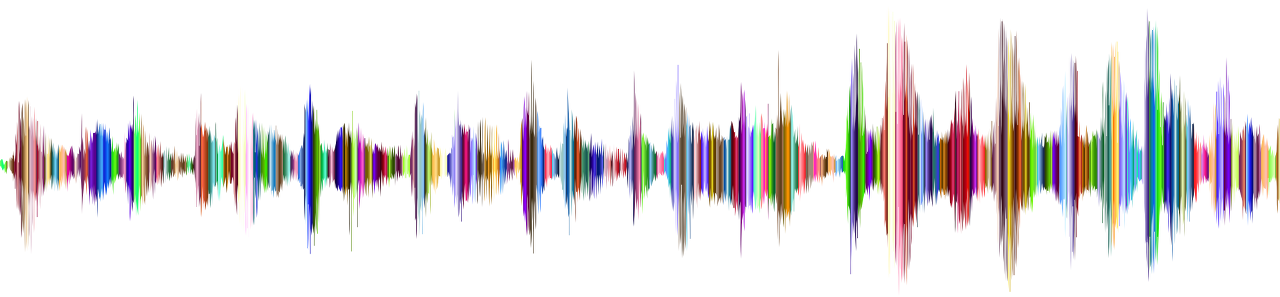

Automatic Speech Recognition. With speech recognition, the input sequence is an audio recording of a speaker (Fig. 1.3.5), and the output is a transcript of what the speaker said. The challenge is that there are many more audio frames (sound is typically sampled at 8 kHz or 16 kHz) than text, i.e., there is no 1:1 correspondence between audio and text, since thousands of samples may correspond to a single spoken word. These are sequence-to-sequence learning problems, where the output is much shorter than the input. While humans are remarkably good at recognizing speech, even from low-quality audio, getting computers to perform the same feat is a formidable challenge.

자동 음성 인식. 음성 인식의 경우 입력 시퀀스는 화자의 오디오 녹음(그림 1.3.5)이고 출력은 화자가 말한 내용의 사본입니다. 문제는 텍스트보다 더 많은 오디오 프레임(사운드는 일반적으로 8kHz 또는 16kHz에서 샘플링됨)이 있다는 것입니다. 즉, 수천 개의 샘플이 단일 음성 단어에 해당할 수 있으므로 오디오와 텍스트 사이에 1:1 대응이 없다는 것입니다. 이는 출력이 입력보다 훨씬 짧은 시퀀스 간 학습 문제입니다. 인간은 품질이 낮은 오디오에서도 음성을 인식하는 데 놀라울 정도로 뛰어나지만, 컴퓨터가 동일한 기능을 수행하도록 하는 것은 엄청난 도전입니다.
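A quick calculation shows why thousands of samples can correspond to one word. The half-second word duration below is an assumed, illustrative figure.

```python
def num_samples(duration_seconds: float, sample_rate_hz: int) -> int:
    """Number of audio samples in a recording of the given length."""
    return round(duration_seconds * sample_rate_hz)


word_duration = 0.5  # hypothetical length of one spoken word, in seconds
samples_8k = num_samples(word_duration, 8_000)
samples_16k = num_samples(word_duration, 16_000)
```

At 8 kHz that single word spans 4,000 samples, and at 16 kHz it spans 8,000, while the transcript contains just one token for it.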

Text to Speech. This is the inverse of automatic speech recognition. Here, the input is text and the output is an audio file. In this case, the output is much longer than the input.

텍스트 음성 변환. 이는 자동 음성 인식의 반대입니다. 여기서 입력은 텍스트이고 출력은 오디오 파일입니다. 이 경우 출력은 입력보다 훨씬 깁니다.

Machine Translation. Unlike the case of speech recognition, where corresponding inputs and outputs occur in the same order, in machine translation, unaligned data poses a new challenge. Here the input and output sequences can have different lengths, and the corresponding regions of the respective sequences may appear in a different order. Consider the following illustrative example of the peculiar tendency of Germans to place the verbs at the end of sentences:

기계 번역. 해당 입력과 출력이 동일한 순서로 발생하는 음성 인식의 경우와 달리 기계 번역에서는 정렬되지 않은 데이터가 새로운 과제를 제기합니다. 여기서, 입력 시퀀스와 출력 시퀀스는 서로 다른 길이를 가질 수 있으며, 각 시퀀스의 해당 영역이 서로 다른 순서로 나타날 수 있다. 문장 끝에 동사를 배치하는 독일인의 독특한 경향을 보여주는 다음 예시를 고려해 보세요.

German: Haben Sie sich schon dieses grossartige Lehrwerk angeschaut?

English: Have you already looked at this excellent textbook?

Wrong alignment: Have you yourself already this excellent textbook looked at?

Many related problems pop up in other learning tasks. For instance, determining the order in which a user reads a webpage is a two-dimensional layout analysis problem. Dialogue problems exhibit all kinds of additional complications, where determining what to say next requires taking into account real-world knowledge and the prior state of the conversation across long temporal distances. Such topics are active areas of research.

다른 학습 과제에서도 많은 관련 문제가 나타납니다. 예를 들어, 사용자가 웹페이지를 읽는 순서를 결정하는 것은 2차원 레이아웃 분석 문제입니다. 대화 문제는 온갖 종류의 추가적인 복잡성을 나타냅니다. 여기서 다음에 말할 내용을 결정하려면 실제 지식과 긴 시간적 거리에 걸친 대화의 이전 상태를 고려해야 합니다. 이러한 주제는 활발한 연구 분야입니다.

1.3.2. Unsupervised and Self-Supervised Learning

The previous examples focused on supervised learning, where we feed the model a giant dataset containing both the features and corresponding label values. You could think of the supervised learner as having an extremely specialized job and an extremely dictatorial boss. The boss stands over the learner’s shoulder and tells them exactly what to do in every situation until they learn to map from situations to actions. Working for such a boss sounds pretty lame. On the other hand, pleasing such a boss is pretty easy. You just recognize the pattern as quickly as possible and imitate the boss’s actions.

이전 예제에서는 지도 학습에 중점을 두었습니다. 여기서는 특징과 해당 레이블 값을 모두 포함하는 거대한 데이터 세트를 모델에 제공합니다. 지도 학습자는 극도로 전문화된 직업과 극도로 독재적인 상사를 갖고 있다고 생각할 수 있습니다. 상사는 학습자의 어깨 너머에 서서 그들이 상황에서 행동으로 매핑하는 방법을 배울 때까지 모든 상황에서 무엇을 해야 하는지 정확하게 알려줍니다. 그런 상사 밑에서 일하는 것은 꽤 형편없는 것처럼 들립니다. 반면에 그런 상사를 기쁘게 하는 것은 꽤 쉽습니다. 최대한 빨리 패턴을 인식하고 상사의 행동을 모방하면 됩니다.

Considering the opposite situation, it could be frustrating to work for a boss who has no idea what they want you to do. However, if you plan to be a data scientist, you had better get used to it. The boss might just hand you a giant dump of data and tell you to do some data science with it! This sounds vague because it is vague. We call this class of problems unsupervised learning, and the type and number of questions we can ask are limited only by our creativity. We will address unsupervised learning techniques in later chapters. To whet your appetite for now, we describe a few of the questions you might ask.

반대의 상황을 고려하면, 자신이 무엇을 하기를 원하는지 전혀 모르는 상사 밑에서 일하는 것은 좌절스러울 수 있습니다. 하지만 데이터 과학자가 될 계획이라면 익숙해지는 것이 좋습니다. 상사는 당신에게 엄청난 양의 데이터를 건네주고 그걸로 데이터 과학을 하라고 말할 수도 있습니다! 모호하기 때문에 모호하게 들립니다. 우리는 이런 종류의 문제를 비지도 학습이라고 부르며, 우리가 물어볼 수 있는 질문의 유형과 수는 우리의 창의성에 의해서만 제한됩니다. 우리는 이후 장에서 비지도 학습 기술을 다룰 것입니다. 지금은 귀하의 식욕을 자극하기 위해 귀하가 물어볼 수 있는 다음 질문 중 몇 가지를 설명합니다.

- Can we find a small number of prototypes that accurately summarize the data? Given a set of photos, can we group them into landscape photos, pictures of dogs, babies, cats, and mountain peaks? Likewise, given a collection of users’ browsing activities, can we group them into users with similar behavior? This problem is typically known as clustering.

데이터를 정확하게 요약하는 소수의 프로토타입을 찾을 수 있습니까? 주어진 사진 세트를 풍경 사진, 개 사진, 아기 사진, 고양이 사진, 산봉우리 사진으로 그룹화할 수 있나요? 마찬가지로, 사용자의 탐색 활동 모음을 바탕으로 유사한 행동을 하는 사용자로 그룹화할 수 있습니까? 이 문제는 일반적으로 클러스터링으로 알려져 있습니다. - Can we find a small number of parameters that accurately capture the relevant properties of the data? The trajectories of a ball are well described by velocity, diameter, and mass of the ball. Tailors have developed a small number of parameters that describe human body shape fairly accurately for the purpose of fitting clothes. These problems are referred to as subspace estimation. If the dependence is linear, it is called principal component analysis.

데이터의 관련 속성을 정확하게 포착하는 소수의 매개변수를 찾을 수 있습니까? 공의 궤적은 공의 속도, 직경, 질량으로 잘 설명됩니다. 재단사는 옷을 맞추는 목적으로 인체 형태를 아주 정확하게 묘사하는 소수의 매개변수를 개발했습니다. 이러한 문제를 부분공간 추정이라고 합니다. 종속성이 선형인 경우 이를 주성분 분석이라고 합니다.

- Is there a representation of (arbitrarily structured) objects in Euclidean space such that symbolic properties can be well matched? This can be used to describe entities and their relations, such as “Rome” − “Italy” + “France” = “Paris”.

상징적 속성이 잘 일치할 수 있도록 유클리드 공간에 (임의로 구조화된) 객체의 표현이 있습니까? 이는 "로마" − "이탈리아" + "프랑스" = "파리"와 같이 엔터티와 해당 관계를 설명하는 데 사용할 수 있습니다.

- Is there a description of the root causes of much of the data that we observe? For instance, if we have demographic data about house prices, pollution, crime, location, education, and salaries, can we discover how they are related simply based on empirical data? The fields concerned with causality and probabilistic graphical models tackle such questions.

우리가 관찰하는 많은 데이터의 근본 원인에 대한 설명이 있습니까? 예를 들어 주택 가격, 오염, 범죄, 위치, 교육, 급여 등에 대한 인구통계학적 데이터가 있다면, 단순히 실증적 데이터를 기반으로 이들이 어떻게 연관되어 있는지 알아낼 수 있을까요? 인과관계 및 확률적 그래픽 모델과 관련된 분야에서는 이러한 질문을 다룹니다.

- Another important and exciting recent development in unsupervised learning is the advent of deep generative models. These models estimate the density of the data, either explicitly or implicitly. Once trained, we can use a generative model either to score examples according to how likely they are, or to sample synthetic examples from the learned distribution. Early deep learning breakthroughs in generative modeling came with the invention of variational autoencoders (Kingma and Welling, 2014, Rezende et al., 2014) and continued with the development of generative adversarial networks (Goodfellow et al., 2014). More recent advances include normalizing flows (Dinh et al., 2014, Dinh et al., 2017) and diffusion models (Ho et al., 2020, Sohl-Dickstein et al., 2015, Song and Ermon, 2019, Song et al., 2021).

비지도 학습의 또 다른 중요하고 흥미로운 최근 발전은 심층 생성 모델의 출현입니다. 이러한 모델은 명시적으로 또는 암시적으로 데이터의 밀도를 추정합니다. 훈련이 완료되면 생성 모델을 사용하여 가능성에 따라 예제의 점수를 매기거나 학습된 분포에서 합성 예제를 샘플링할 수 있습니다. 생성 모델링의 초기 딥 러닝 혁신은 변분 오토인코더(Kingma and Welling, 2014, Rezende et al., 2014)의 발명과 함께 이루어졌으며 생성적 적대 신경망(Goodfellow et al., 2014)의 개발로 이어졌습니다. 보다 최근의 발전에는 정규화 흐름(Dinh et al., 2014, Dinh et al., 2017)과 확산 모델(Ho et al., 2020, Sohl-Dickstein et al., 2015, Song and Ermon, 2019, Song et al., 2021)이 포함됩니다.
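The clustering question from the list above (finding a small number of prototypes that summarize the data) can be made concrete with a minimal k-means sketch. This is only an illustration under stated assumptions: the two-feature `browsing` data is made up, the function names are hypothetical, and using the first `k` points as initial prototypes is a simplification chosen to keep the example deterministic.

```python
def squared_distance(p, q):
    """Squared Euclidean distance between two points given as tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))


def kmeans(points, k, num_iters=10):
    """Return (prototypes, assignments) after a fixed number of iterations."""
    # Assumption for this sketch: the first k points are the initial prototypes.
    prototypes = [points[i] for i in range(k)]
    assignments = [0] * len(points)
    for _ in range(num_iters):
        # Assignment step: attach every point to its nearest prototype.
        assignments = [
            min(range(k), key=lambda j: squared_distance(p, prototypes[j]))
            for p in points
        ]
        # Update step: move each prototype to the mean of its members.
        for j in range(k):
            members = [p for p, a in zip(points, assignments) if a == j]
            if members:
                prototypes[j] = mean_point(members)
    return prototypes, assignments


# Made-up data: (hours on news pages, hours on shopping pages) per user.
browsing = [
    (1.0, 9.0), (9.0, 1.0), (1.5, 8.5), (8.5, 1.5), (0.5, 9.5), (9.5, 0.5),
]
prototypes, assignments = kmeans(browsing, k=2)
print(prototypes)
print(assignments)
```

No labels are supplied anywhere: the grouping emerges from the distances alone, which is what distinguishes this from the supervised setting.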

A further development in unsupervised learning has been the rise of self-supervised learning, techniques that leverage some aspect of the unlabeled data to provide supervision. For text, we can train models to “fill in the blanks” by predicting randomly masked words using their surrounding words (contexts) in big corpora without any labeling effort (Devlin et al., 2018)! For images, we may train models to tell the relative position between two cropped regions of the same image (Doersch et al., 2015), to predict an occluded part of an image based on the remaining portions of the image, or to predict whether two examples are perturbed versions of the same underlying image. Self-supervised models often learn representations that are subsequently leveraged by fine-tuning the resulting models on some downstream task of interest.

비지도 학습의 추가적인 발전은 감독을 제공하기 위해 레이블이 지정되지 않은 데이터의 일부 측면을 활용하는 기술인 자기 지도 학습의 등장입니다. 텍스트의 경우 라벨링 작업 없이 큰 말뭉치에서 주변 단어(컨텍스트)를 사용하여 무작위로 마스크된 단어를 예측하여 "빈칸을 채우도록" 모델을 훈련할 수 있습니다(Devlin et al., 2018)! 이미지의 경우 동일한 이미지에서 잘라낸 두 영역 사이의 상대적 위치를 맞히거나(Doersch et al., 2015), 이미지의 나머지 부분을 기반으로 가려진 부분을 예측하거나, 두 예제가 동일한 원본 이미지를 교란한 버전인지 여부를 예측하도록 모델을 훈련할 수 있습니다. 자기 지도 모델은 관심 있는 일부 다운스트림 작업에서 결과 모델을 미세 조정하여 이후에 활용되는 표현을 학습하는 경우가 많습니다.
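To illustrate how unlabeled text can supply its own supervision, the following sketch builds a single "fill in the blank" training example by hiding one token. It covers only the data-preparation step, not a model; the `[MASK]` placeholder string and the function name are assumptions made for this example, and real systems typically mask several tokens per sequence.

```python
import random

MASK = "[MASK]"  # placeholder token name assumed for this sketch


def make_masked_example(tokens, rng):
    """Hide one randomly chosen token.

    Returns (masked_tokens, position, target), where target is the hidden
    word that a model would be trained to predict from the context.
    """
    position = rng.randrange(len(tokens))
    masked = list(tokens)
    target = masked[position]
    masked[position] = MASK
    return masked, position, target


rng = random.Random(0)
sentence = "the agent receives a reward from the environment".split()
masked, position, target = make_masked_example(sentence, rng)
print(masked, "->", target)
```

The label (`target`) comes from the text itself, so no human annotation is involved.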

1.3.3. Interacting with an Environment

So far, we have not discussed where data actually comes from, or what actually happens when a machine learning model generates an output. That is because supervised learning and unsupervised learning do not address these issues in a very sophisticated way. In each case, we grab a big pile of data upfront, then set our pattern recognition machines in motion without ever interacting with the environment again. Because all the learning takes place after the algorithm is disconnected from the environment, this is sometimes called offline learning. For example, supervised learning assumes the simple interaction pattern depicted in Fig. 1.3.6.

지금까지 우리는 데이터가 실제로 어디에서 오는지 또는 기계 학습 모델이 출력을 생성할 때 실제로 어떤 일이 발생하는지 논의하지 않았습니다. 지도 학습과 비지도 학습은 매우 정교한 방식으로 이러한 문제를 해결하지 못하기 때문입니다. 각각의 경우에 우리는 대량의 데이터를 미리 수집한 다음 환경과 다시 상호 작용하지 않고 패턴 인식 기계를 작동하도록 설정합니다. 모든 학습은 알고리즘이 환경과 분리된 후에 이루어지기 때문에 이를 오프라인 학습이라고도 합니다. 예를 들어, 지도 학습은 그림 1.3.6에 묘사된 단순한 상호 작용 패턴을 가정합니다.

This simplicity of offline learning has its charms. The upside is that we can worry about pattern recognition in isolation, with no concern about complications arising from interactions with a dynamic environment. But this problem formulation is limiting. If you grew up reading Asimov’s Robot novels, then you probably picture artificially intelligent agents capable not only of making predictions, but also of taking actions in the world. We want to think about intelligent agents, not just predictive models. This means that we need to think about choosing actions, not just making predictions. In contrast to mere predictions, actions actually impact the environment. If we want to train an intelligent agent, we must account for the way its actions might impact the future observations of the agent, and so offline learning is inappropriate.

이러한 오프라인 학습의 단순함은 매력이 있습니다. 장점은 동적 환경과의 상호 작용으로 인해 발생하는 합병증에 대한 걱정 없이 패턴 인식만 따로 걱정할 수 있다는 것입니다. 그러나 이 문제 공식화는 제한적입니다. Asimov의 로봇 소설을 읽으며 자랐다면 아마도 예측을 할 수 있을 뿐만 아니라 세상에서 행동을 취할 수도 있는 인공지능 에이전트를 떠올릴 것입니다. 우리는 단순한 예측 모델이 아닌 지능형 에이전트에 대해 생각하고 싶습니다. 이는 단순히 예측을 하는 것이 아니라 행동을 선택하는 것에 대해 생각해야 한다는 것을 의미합니다. 단순한 예측과 달리 행동은 실제로 환경에 영향을 미칩니다. 지능형 에이전트를 훈련하려면 에이전트의 행동이 에이전트의 향후 관찰에 영향을 미칠 수 있는 방식을 고려해야 하므로 오프라인 학습은 부적절합니다.

Considering the interaction with an environment opens a whole set of new modeling questions. The following are just a few examples.

환경과의 상호 작용을 고려하면 완전히 새로운 모델링 문제가 발생합니다. 다음은 몇 가지 예입니다.

- Does the environment remember what we did previously?

- 환경은 우리가 이전에 한 일을 기억합니까?

- Does the environment want to help us, e.g., a user reading text into a speech recognizer?

- 예를 들어 사용자가 음성 인식기로 텍스트를 읽는 것과 같이 환경이 우리를 돕고 싶어합니까?

- Does the environment want to beat us, e.g., spammers adapting their emails to evade spam filters?

- 환경이 우리를 이기기를 원합니까? 예를 들어 스팸 필터를 회피하기 위해 이메일을 조정하는 스패머가 있습니까?

- Does the environment have shifting dynamics? For example, would future data always resemble the past or would the patterns change over time, either naturally or in response to our automated tools?

- 환경에 변화하는 역학이 있습니까? 예를 들어, 미래의 데이터는 항상 과거와 유사할까요, 아니면 자연적으로 또는 자동화된 도구에 반응하여 시간이 지남에 따라 패턴이 변경됩니까?

These questions raise the problem of distribution shift, where training and test data are different. An example that many of us may have encountered is taking an exam written by a lecturer after doing homework composed by their teaching assistants. Next, we briefly describe reinforcement learning, a rich framework for posing learning problems in which an agent interacts with an environment.

이러한 질문은 훈련 데이터와 테스트 데이터가 다른 분포 이동 문제를 제기합니다. 우리 중 많은 사람들이 접했을 수 있는 이에 대한 예는 강사가 작성한 시험을 치르고 숙제는 조교가 작성하는 경우입니다. 다음으로 에이전트가 환경과 상호 작용하는 학습 문제를 제기하기 위한 풍부한 프레임워크인 강화 학습에 대해 간략하게 설명합니다.

1.3.4. Reinforcement Learning

If you are interested in using machine learning to develop an agent that interacts with an environment and takes actions, then you are probably going to wind up focusing on reinforcement learning. This might include applications to robotics, to dialogue systems, and even to developing artificial intelligence (AI) for video games. Deep reinforcement learning, which applies deep learning to reinforcement learning problems, has surged in popularity. The breakthrough deep Q-network, which beat humans at Atari games using only the visual input (Mnih et al., 2015), and the AlphaGo program, which dethroned the world champion at the board game Go (Silver et al., 2016), are two prominent examples.

기계 학습을 사용하여 환경과 상호 작용하고 조치를 취하는 에이전트를 개발하는 데 관심이 있다면 아마도 강화 학습에 집중하게 될 것입니다. 여기에는 로봇 공학, 대화 시스템, 심지어 비디오 게임용 인공 지능(AI) 개발에 대한 애플리케이션이 포함될 수 있습니다. 강화학습 문제에 딥러닝을 적용한 심층강화학습이 인기를 끌었습니다. 시각적 입력만을 사용하여 Atari 게임에서 인간을 이긴 획기적인 심층 Q 네트워크(Mnih et al., 2015)와 보드 게임 바둑에서 세계 챔피언을 물리친 AlphaGo 프로그램(Silver et al., 2016)이 두 가지 대표적인 예입니다.

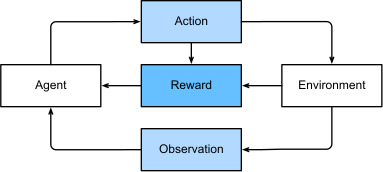

Reinforcement learning gives a very general statement of a problem in which an agent interacts with an environment over a series of time steps. At each time step, the agent receives some observation from the environment and must choose an action, which is then transmitted back to the environment via some mechanism (sometimes called an actuator). After each such loop, the agent receives a reward from the environment. This process is illustrated in Fig. 1.3.7. The agent then receives a subsequent observation, and chooses a subsequent action, and so on. The behavior of a reinforcement learning agent is governed by a policy. In brief, a policy is just a function that maps from observations of the environment to actions. The goal of reinforcement learning is to produce good policies.

강화 학습은 에이전트가 일련의 시간 단계에 걸쳐 환경과 상호 작용하는 문제에 대한 매우 일반적인 설명을 제공합니다. 각 시간 단계에서 에이전트는 환경으로부터 관찰을 받고 행동을 선택해야 하며, 이 행동은 어떤 메커니즘(액추에이터라고도 함)을 통해 환경으로 다시 전달됩니다. 이러한 루프가 한 번 끝날 때마다 에이전트는 환경으로부터 보상을 받습니다. 이 프로세스는 그림 1.3.7에 설명되어 있습니다. 그런 다음 에이전트는 후속 관찰을 받고 후속 행동을 선택하며, 이 과정이 반복됩니다. 강화 학습 에이전트의 행동은 정책에 의해 결정됩니다. 간단히 말해서, 정책은 환경에 대한 관찰을 행동으로 매핑하는 함수일 뿐입니다. 강화 학습의 목표는 좋은 정책을 만드는 것입니다.
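The observation, action, reward loop described above can be sketched in a few lines. Everything here is hypothetical and chosen for illustration: a toy corridor environment with positions 0 to 4, a reward of 1 for reaching position 4, and a fixed hand-written policy rather than a learned one.

```python
class CorridorEnvironment:
    """Toy environment (an assumption of this sketch): positions 0..4, goal at 4."""

    def __init__(self):
        self.position = 0

    def observe(self):
        # The observation is simply the current position.
        return self.position

    def step(self, action):
        # action is -1 (move left) or +1 (move right); clip to the corridor.
        self.position = max(0, min(4, self.position + action))
        # Reward 1.0 whenever the agent stands on the goal cell, else 0.0.
        return 1.0 if self.position == 4 else 0.0


def always_right_policy(observation):
    """A policy maps an observation to an action; this one ignores its input."""
    return +1


def run_episode(env, policy, num_steps):
    """Run the interaction loop and record (observation, action, reward) triples."""
    total_reward = 0.0
    history = []
    for _ in range(num_steps):
        observation = env.observe()   # agent receives an observation
        action = policy(observation)  # policy chooses an action
        reward = env.step(action)     # environment responds with a reward
        history.append((observation, action, reward))
        total_reward += reward
    return total_reward, history


total_reward, history = run_episode(
    CorridorEnvironment(), always_right_policy, num_steps=4
)
print(total_reward)
print(history)
```

Learning would consist of replacing `always_right_policy` with a function that improves from the recorded rewards; that part is deliberately left out here.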

It is hard to overstate the generality of the reinforcement learning framework. For example, supervised learning can be recast as reinforcement learning. Say we had a classification problem. We could create a reinforcement learning agent with one action corresponding to each class. We could then create an environment which gave a reward exactly equal to the negative of the loss from the original supervised learning problem, so that maximizing reward corresponds to minimizing loss.

강화학습 프레임워크의 일반성을 과장하기는 어렵습니다. 예를 들어 지도 학습은 강화 학습으로 재구성될 수 있습니다. 분류 문제가 있다고 가정해 보겠습니다. 각 클래스마다 하나의 행동을 갖는 강화 학습 에이전트를 만들 수 있습니다. 그런 다음 원래 지도 학습 문제의 손실에 음수 부호를 붙인 값과 정확히 같은 보상을 주는 환경을 만들 수 있으며, 이때 보상을 최대화하는 것은 손실을 최소화하는 것과 같습니다.
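As a rough illustration of recasting classification as reinforcement learning, the sketch below wraps a labeled dataset in an environment whose actions are class indices. It assumes a zero-one loss and defines the reward as the negative of that loss, so that a higher reward means a lower loss; the class name, the tiny dataset, and the threshold policy are all hypothetical.

```python
class ClassificationEnvironment:
    """Presents one labeled example per step; each action is a predicted class."""

    def __init__(self, examples):
        self.examples = examples  # list of (features, label) pairs
        self.index = 0

    def observe(self):
        # Only the features are revealed to the agent, never the label.
        features, _ = self.examples[self.index]
        return features

    def step(self, action):
        _, label = self.examples[self.index]
        loss = 0.0 if action == label else 1.0  # zero-one loss (assumed here)
        self.index = (self.index + 1) % len(self.examples)
        return -loss  # reward is the negative loss


def threshold_policy(features):
    """Hypothetical classifier: predict class 1 if the feature exceeds 0.5."""
    return 1 if features[0] > 0.5 else 0


examples = [((0.1,), 0), ((0.9,), 1), ((0.7,), 0), ((0.2,), 0)]
env = ClassificationEnvironment(examples)
rewards = []
for _ in examples:
    rewards.append(env.step(threshold_policy(env.observe())))

print(sum(rewards))
```

The policy misclassifies only the third example, so the total reward equals minus the number of mistakes.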

Further, reinforcement learning can also address many problems that supervised learning cannot. For example, in supervised learning, we always expect that the training input comes associated with the correct label. But in reinforcement learning, we do not assume that, for each observation, the environment tells us the optimal action. In general, we just get some reward. Moreover, the environment may not even tell us which actions led to the reward.

또한 강화 학습은 지도 학습이 해결할 수 없는 많은 문제를 해결할 수도 있습니다. 예를 들어 지도 학습에서는 항상 훈련 입력이 올바른 라벨과 연결될 것으로 기대합니다. 그러나 강화 학습에서는 각 관찰에 대해 환경이 최적의 행동을 알려준다고 가정하지 않습니다. 일반적으로 우리는 약간의 보상만 받습니다. 더욱이, 환경은 어떤 행동이 보상으로 이어졌는지조차 말해주지 않을 수도 있습니다.

Consider the game of chess. The only real reward signal comes at the end of the game when we either win, earning a reward of, say, 1, or when we lose, receiving a reward of, say, −1. So reinforcement learners must deal with the credit assignment problem: determining which actions to credit or blame for an outcome. The same goes for an employee who gets a promotion on October 11. That promotion likely reflects a number of well-chosen actions over the previous year. Getting promoted in the future requires figuring out which actions along the way led to the earlier promotions.

체스 게임을 생각해 보십시오. 유일한 실제 보상 신호는 게임이 끝날 때 우리가 승리하여 가령 1의 보상을 얻거나, 패배할 때 가령 -1의 보상을 받을 때 나타납니다. 따라서 강화 학습자는 점수 할당 문제, 즉 결과에 대해 어떤 행동을 인정하거나 비난할지 결정하는 문제를 처리해야 합니다. 10월 11일에 승진한 직원의 경우에도 마찬가지입니다. 해당 승진은 전년도에 잘 선택된 여러 가지 조치를 반영한 것 같습니다. 미래에 승진하려면 그 과정에서 어떤 행동이 이전 승진으로 이어졌는지 파악해야 합니다.
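One simple convention for spreading a delayed reward back over earlier actions is to compute, for every time step, the (optionally discounted) sum of all rewards that follow it. The text does not prescribe this method; the sketch below is only meant to make the credit assignment problem tangible, and the `discount` parameter and function name are assumptions of this example.

```python
def returns_to_go(rewards, discount=1.0):
    """For each step t, sum rewards[t] + discount * rewards[t+1] + ..."""
    returns = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step can reuse the return of the step after it.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + discount * running
        returns[t] = running
    return returns


# A four-move game where the only reward (a win, +1) arrives at the end.
game_rewards = [0.0, 0.0, 0.0, 1.0]
undiscounted = returns_to_go(game_rewards)
discounted = returns_to_go(game_rewards, discount=0.5)
print(undiscounted)
print(discounted)
```

Without discounting, every move receives equal credit for the win; with discounting, moves closer to the outcome receive more. Neither answers which moves actually mattered, which is why credit assignment remains a hard problem.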

Reinforcement learners may also have to deal with the problem of partial observability. That is, the current observation might not tell you everything about your current state. Say your cleaning robot found itself trapped in one of many identical closets in your house. Rescuing the robot involves inferring its precise location, which might require considering the observations it made before entering the closet.

강화 학습자는 부분 관찰 가능성 문제도 처리해야 할 수 있습니다. 즉, 현재 관찰이 현재 상태에 대한 모든 것을 알려주지 못할 수도 있습니다. 청소 로봇이 집에 있는 여러 개의 똑같은 옷장 중 하나에 갇혔다고 가정해 보겠습니다. 로봇을 구출하려면 로봇의 정확한 위치를 추론해야 하며, 이를 위해서는 로봇이 옷장에 들어가기 전의 관찰을 고려해야 할 수도 있습니다.

Finally, at any given point, reinforcement learners might know of one good policy, but there might be many other better policies that the agent has never tried. The reinforcement learner must constantly choose whether to exploit the best (currently) known strategy as a policy, or to explore the space of strategies, potentially giving up some short-term reward in exchange for knowledge.

마지막으로, 특정 시점에서 강화 학습기는 하나의 좋은 정책을 알 수 있지만 에이전트가 시도한 적이 없는 다른 더 나은 정책이 많이 있을 수도 있습니다. 강화 학습자는 (현재) 가장 잘 알려진 전략을 정책으로 활용할지, 아니면 전략의 공간을 탐색할지(잠재적으로 지식의 대가로 단기 보상을 포기할지) 끊임없이 선택해야 합니다.

The reinforcement learning problem is posed in a very general setting. Actions affect subsequent observations. Rewards are only observed when they correspond to the chosen actions. The environment may be either fully or partially observed. Accounting for all this complexity at once may be asking too much. Moreover, not every practical problem exhibits all this complexity. As a result, researchers have studied a number of special cases of reinforcement learning problems.

일반적인 강화학습 문제는 매우 일반적인 설정을 가지고 있습니다. 행동은 후속 관찰에 영향을 미칩니다. 보상은 선택한 행동에 해당하는 경우에만 관찰됩니다. 환경은 완전히 또는 부분적으로 관찰될 수 있습니다. 이 모든 복잡성을 한꺼번에 설명하는 것은 너무 많은 것을 요구할 수 있습니다. 더욱이 모든 실제 문제가 이러한 복잡성을 모두 나타내는 것은 아닙니다. 그 결과, 연구자들은 강화 학습 문제의 특별한 사례를 많이 연구했습니다.

When the environment is fully observed, we call the reinforcement learning problem a Markov decision process. When the state does not depend on the previous actions, we call it a contextual bandit problem. When there is no state, just a set of available actions with initially unknown rewards, we have the classic multi-armed bandit problem.

환경이 완전히 관찰되면 강화 학습 문제를 마르코프 결정 프로세스라고 부릅니다. 상태가 이전 행동에 의존하지 않는 경우 이를 컨텍스트 밴딧(contextual bandit) 문제라고 부릅니다. 상태가 없고 처음에는 보상을 알 수 없는 사용 가능한 행동 집합만 있는 경우 고전적인 멀티 암드 밴딧(multi-armed bandit) 문제가 됩니다.
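The multi-armed bandit is the simplest setting in which the trade-off between exploiting the best known action and exploring alternatives shows up. The sketch below uses epsilon-greedy selection, one common heuristic that the text itself does not name: with probability `epsilon` the agent tries a random arm, otherwise it pulls the arm with the highest estimated reward. The Gaussian reward model, the arm means, and all function and parameter names are assumptions made for this example.

```python
import random


def epsilon_greedy_bandit(true_means, num_steps, epsilon, seed=0):
    """Simulate epsilon-greedy play on a bandit with Gaussian rewards.

    Returns (estimates, counts, total_reward).
    """
    rng = random.Random(seed)
    num_arms = len(true_means)
    counts = [0] * num_arms        # how often each arm was pulled
    estimates = [0.0] * num_arms   # running mean reward per arm
    total_reward = 0.0
    for _ in range(num_steps):
        if rng.random() < epsilon:
            arm = rng.randrange(num_arms)  # explore: pick a random arm
        else:
            # Exploit: pick the arm with the best current estimate.
            arm = max(range(num_arms), key=lambda a: estimates[a])
        # The true means are unknown to the agent; it only sees noisy rewards.
        reward = rng.gauss(true_means[arm], 1.0)
        counts[arm] += 1
        # Incremental update of the running mean for the pulled arm.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
        total_reward += reward
    return estimates, counts, total_reward


estimates, counts, total_reward = epsilon_greedy_bandit(
    true_means=[0.0, 1.0, 3.0], num_steps=5000, epsilon=0.1
)
print(counts)
print([round(e, 2) for e in estimates])
```

Setting `epsilon=0` would make the agent purely greedy, so it could lock onto whichever arm happened to look good first; the small exploration rate gives up some short-term reward in exchange for knowledge about the other arms.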

1.4. Roots